Multimodal Foundation Models (MFMs) are large, pre-trained AI systems that can understand and process several types of data, such as text, images, audio, and video, within a single model. For businesses, this means being able to analyze complex datasets more holistically than single-purpose models allow. Imagine a customer feedback system that not only reads what customers type but also “sees” their product photos and “hears” their voice recordings. That’s the power MFMs bring to enterprise data analysis.

Think of a foundation model as a very smart student who’s read countless books, seen endless pictures, and heard countless conversations. They’ve built up a general understanding of the world, making them incredibly versatile. Now, imagine that student isn’t limited to just reading, but can also understand images, listen to audio, and watch videos – all at the same time. That’s a multimodal foundation model.

Beyond Single-Sense AI

Traditionally, AI models have been built for specific data types. You had natural language processing (NLP) models for text, computer vision (CV) models for images, and audio processing models for sound. These worked well in their silos, but the real world isn’t siloed. Information rarely comes in neat, single-data packages.

The Power of Integrated Understanding

MFMs bridge these gaps. They learn to find connections and relationships across different data modalities. This integrated understanding is crucial because often, the most valuable insights lie in the interplay between various data points. A picture without a caption might be ambiguous, but a picture with a caption and an accompanying voice recording of someone describing it becomes far more meaningful.

Why Should Enterprises Care About MFMs?

Simply put, enterprises deal with messy, multimodal data every single day. Customer interactions aren’t just emails; they’re calls, social media posts with images, and product reviews with attached videos. Internal operational data isn’t just spreadsheets; it’s also sensor readings, security camera footage, and engineering schematics. MFMs offer a pathway to unlock deeper, more contextual insights from this rich tapestry of information.

Unlocking Deeper Customer Insights

Let’s consider customer feedback. Previously, an enterprise might analyze text reviews for sentiment, or manually tag themes in image submissions. With an MFM, you can combine these.

Analyzing Customer Journeys Holistically

Imagine a customer service interaction. An MFM could analyze the transcript of a call (text), look at screenshots the customer sent (image), and even detect frustration in their voice (audio). This combined analysis provides a much richer understanding of their problem and their emotional state than any single modality could reveal. It allows for more empathetic and effective customer support.

Enhanced Product Feedback Analysis

Customers often share product feedback with photos or videos. An MFM can not only understand the textual description of a bug but also “see” the bug in the accompanying image or video. This helps product teams quickly pinpoint issues and prioritize fixes based on visual evidence and reported sentiment.

Streamlining Operations and Decision-Making

Enterprise operations are rife with opportunities for MFM deployment. From manufacturing to logistics, the ability to process diverse data types can lead to significant efficiency gains and better strategic choices.

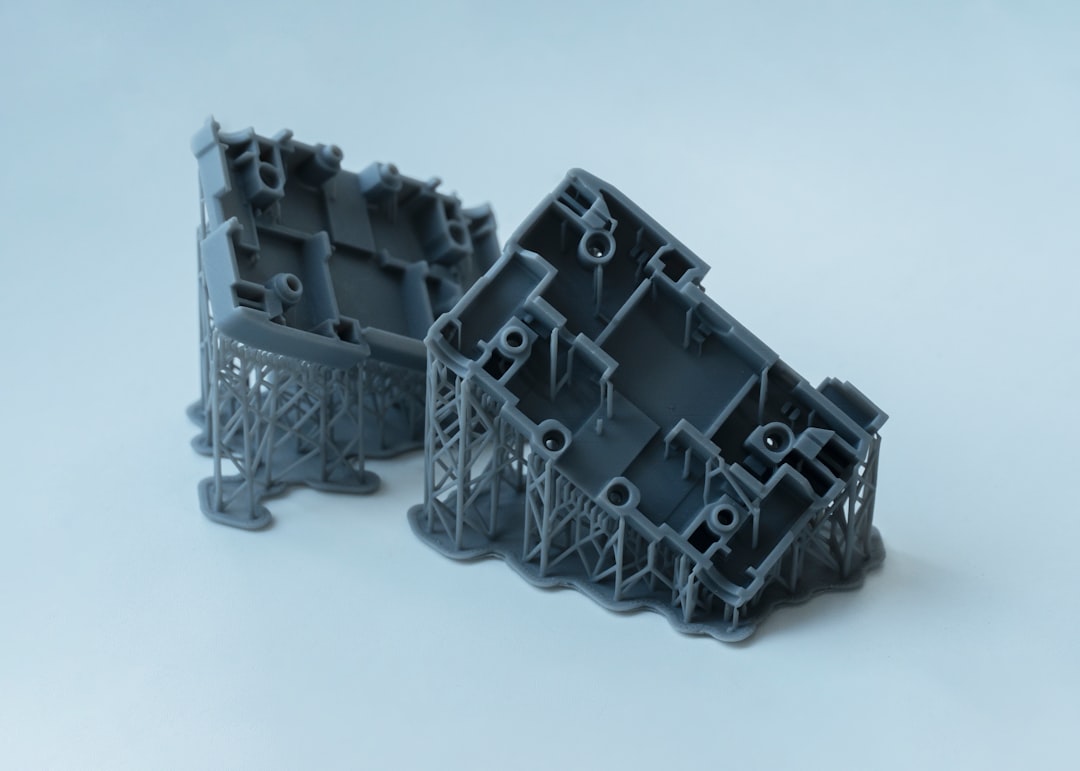

Predictive Maintenance with Multimodal Data

In manufacturing, sensor data (numerical), machine logs (text), and even camera feeds of equipment (image/video) all provide clues about potential failures. An MFM can correlate these diverse inputs to predict maintenance needs more accurately, reducing downtime and costs compared to models relying on single data streams.
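To make the idea of correlating inputs concrete, here is a minimal sketch of “late fusion”: each data source produces its own failure-risk score, and a weighted average combines them. This is a deliberately simple stand-in, not how an MFM works internally (an MFM learns joint representations rather than averaging hand-weighted scores). The class name, scores, weights, and threshold are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ModalityScore:
    """Failure-risk estimate in [0, 1] from one data source (hypothetical)."""
    name: str
    risk: float
    weight: float


def fuse_risk(scores: list[ModalityScore]) -> float:
    """Combine per-modality risks with a weighted average (simple late fusion)."""
    total_weight = sum(s.weight for s in scores)
    if total_weight <= 0:
        raise ValueError("at least one modality needs a positive weight")
    return sum(s.risk * s.weight for s in scores) / total_weight


# Illustrative values only; not taken from any real machine.
readings = [
    ModalityScore("vibration_sensor", risk=0.8, weight=0.5),
    ModalityScore("machine_log_text", risk=0.4, weight=0.3),
    ModalityScore("camera_feed", risk=0.6, weight=0.2),
]

MAINTENANCE_THRESHOLD = 0.6  # assumed cut-off for scheduling an inspection

fused = fuse_risk(readings)
needs_inspection = fused >= MAINTENANCE_THRESHOLD
print(f"fused risk = {fused:.2f}, schedule inspection: {needs_inspection}")
```

In this toy example, the machine log alone (0.4) would not trigger an inspection, but the combined score does, which is the basic argument for looking at several data streams together.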

Supply Chain Optimization

Logistics involve tracking shipments, weather patterns, traffic data, and warehouse inventory levels. An MFM can fuse all this information (text-based alerts, satellite images of weather, numerical sensor data from containers) to optimize routes, predict delays, and manage inventory more effectively.

Practical Applications of MFMs in Enterprise Data Analysis

The real magic happens when these theoretical capabilities are applied to concrete business problems. Here are some areas where MFMs are already making a difference or show immense promise.

Enhanced Search and Information Retrieval

Finding specific information within vast enterprise data archives can be a huge headache. MFMs can revolutionize this.

Beyond Keyword Search

Instead of just searching for keywords in documents, imagine searching for “a picture of our new product experiencing a scratch on its casing” and having the MFM surface relevant images, videos, and associated text descriptions, even if the exact keywords aren’t present. It understands the concept across modalities.
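One common way to build this kind of search is to map every item, whatever its type, into a shared vector space and rank by similarity to the query. The sketch below assumes that approach. The three-dimensional vectors are hand-written stand-ins for what a multimodal encoder would produce, and the file names and the `search` helper are hypothetical.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors of equal length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Hypothetical embeddings: a real system would obtain these from a
# multimodal encoder, not write them by hand.
index = {
    "photo_scratched_casing.jpg": [0.9, 0.1, 0.0],
    "video_unboxing.mp4": [0.1, 0.9, 0.1],
    "ticket_casing_damage.txt": [0.8, 0.2, 0.1],
    "invoice_2023.pdf": [0.0, 0.1, 0.9],
}


def search(query_vec: list[float], index: dict[str, list[float]], top_k: int = 2) -> list[str]:
    """Return the names of the top_k items most similar to the query vector."""
    ranked = sorted(
        index.items(),
        key=lambda item: cosine_similarity(query_vec, item[1]),
        reverse=True,
    )
    return [name for name, _ in ranked[:top_k]]


# Stand-in for the embedded query "scratch on the product casing".
query_vec = [0.85, 0.15, 0.05]
results = search(query_vec, index)
print(results)
```

With these toy vectors, the photo and the support ticket about casing damage come back as the top two results, even though one is an image and the other is text. The ranking depends only on position in the shared space, not on file type or keywords.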

Cross-Modal Data Linking

An employee might query “show me all customer complaints related to the red button on product X.” An MFM could pull up not only text-based complaints but also videos where customers point to the button, or images showing issues near it, creating a more comprehensive picture.

Automated Content Generation and Moderation

Enterprises produce and consume enormous amounts of content. MFMs can assist in both creating and monitoring this content.

Smart Content Summarization and Generation

Imagine an MFM that can analyze a technical document (text), accompanying diagrams (image), and design meeting recordings (audio) to generate a concise summary for marketing or even draft initial versions of user manuals. It learns to represent complex information in a simplified, accessible way for different audiences.

Intelligent Content Moderation

Social media platforms and internal communication channels often struggle with content moderation. MFMs can identify problematic content that combines images, text, and even audio. For example, a picture with hidden symbols, accompanied by seemingly innocuous text, might be flagged as inappropriate by an MFM that understands the subtle context, whereas a text-only or image-only model might miss it.

Personalization and Recommendation Engines

MFMs can take personalization to a whole new level by understanding user preferences across various interaction points.

Richer User Profiles

Instead of just knowing what articles a user reads, an MFM can understand what types of images they engage with, what style of videos they watch, and even infer their preferences from their voice commands. This allows for a more nuanced and accurate user profile.

Cross-Modal Recommendations

An MFM could recommend not just articles to read, but also videos to watch, products to buy, or even courses to take, all based on a holistic understanding of a user’s past interactions and stated preferences across different data types. For example, if a user frequently views images of nature and searches for hiking gear, the MFM could recommend nature documentaries or local hiking trails.

Challenges and Considerations for Enterprise Adoption

While the potential of MFMs is exciting, implementing them in an enterprise setting isn’t without its hurdles. It’s important to go into this with open eyes.

Data Preparation and Integration Complexity

MFMs thrive on diverse data, but bringing that data together in a usable format is a significant task.

Handling Data Variety and Volume

Enterprises often have data scattered across numerous systems, in different formats, and with varying levels of quality. Integrating text from CRM, images from product databases, and audio from call centers into a unified system for MFM training requires robust data engineering pipelines. The sheer volume can also be overwhelming.

Annotation and Labeling Efforts

While MFMs are pre-trained, fine-tuning them for specific enterprise tasks often requires labeled data. Getting consistent, high-quality labels across multiple modalities (e.g., segmenting objects in images and transcribing relevant parts of audio) is a labor-intensive and costly process.

Computational Resources and Cost

MFMs are massive models, and their training and inference demand substantial computational power.

Infrastructure Requirements

Running and fine-tuning MFMs typically requires powerful GPUs and significant cloud computing resources. Enterprises need to assess their existing infrastructure and budget for these advanced computing needs. This isn’t a small server in the corner.

Energy Consumption

The environmental impact of large AI models is a growing concern. Enterprises adopting MFMs should be mindful of their energy footprint and explore efficient deployment and inference strategies where possible.

Model Explainability and Trust

Understanding why an MFM makes a particular decision is crucial, especially in regulated industries.

The “Black Box” Problem

Like many advanced AI models, MFMs can be opaque. It can be challenging to trace the exact reasoning path when they combine insights from multiple modalities. This lack of transparency can be a barrier to adoption in fields where accountability and explainability are paramount.

Ensuring Fairness and Mitigating Bias

If the training data for an MFM contains biases (e.g., underrepresentation of certain demographics in image datasets, or biased language in text), these biases can be reproduced, and sometimes amplified, in the model’s outputs. Enterprises must implement rigorous methods to audit and mitigate bias in both their training data and the MFM’s behavior.
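A basic audit can start with something as simple as comparing a model’s accuracy across groups. The sketch below assumes you already have evaluation records with a group label, a prediction, and a ground-truth label. The group names and records are made up for illustration, and accuracy gap is only one of several fairness measures you might choose.

```python
from collections import defaultdict

# Hypothetical evaluation records: (group, model_prediction, true_label).
records = [
    ("group_a", 1, 1), ("group_a", 0, 0), ("group_a", 1, 1), ("group_a", 0, 0),
    ("group_b", 1, 0), ("group_b", 0, 0), ("group_b", 0, 1), ("group_b", 1, 1),
]


def accuracy_by_group(records: list[tuple[str, int, int]]) -> dict[str, float]:
    """Fraction of correct predictions for each group."""
    correct: dict[str, int] = defaultdict(int)
    total: dict[str, int] = defaultdict(int)
    for group, predicted, actual in records:
        total[group] += 1
        correct[group] += int(predicted == actual)
    return {group: correct[group] / total[group] for group in total}


per_group = accuracy_by_group(records)
gap = max(per_group.values()) - min(per_group.values())
print(per_group, f"accuracy gap = {gap:.2f}")
```

A large gap between groups does not explain where the bias comes from, but it tells you where to look first, whether in the training data or in how a particular modality is being interpreted.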

Getting Started with Multimodal Foundation Models

| Model | Accuracy | Training Time | Inference Time |
|---|---|---|---|
| MMF-1 | 92% | 3 days | 10 ms |
| MMF-2 | 95% | 5 days | 15 ms |
| MMF-3 | 89% | 4 days | 12 ms |

So, you’re convinced MFMs have a place in your enterprise. How do you begin? It’s not about jumping in headfirst with the biggest, most expensive model.

Start Small and Prioritize Use Cases

Identify a few key business problems where multimodal insights would genuinely provide a breakthrough. Don’t try to solve everything at once.

Identify High-Impact Areas

Which areas of your business are currently struggling with siloed data analysis? Where do you have a rich mix of data types that aren’t currently being leveraged together? Customer service, product feedback, or particular operational workflows are good starting points.

Pilot Projects and MVPs

Begin with a manageable pilot project. Focus on demonstrating a clear return on investment (ROI) or significant operational improvement with a minimum viable product (MVP). This allows you to learn, iterate, and build internal expertise without massive upfront commitments.

Leverage Existing Cloud Services and Partnerships

You don’t need to build MFMs from scratch. Many cloud providers and AI companies offer pre-trained MFMs and specialized services.

Utilizing APIs and Managed Services

Platforms like Google Cloud’s Vertex AI, Amazon Bedrock on AWS, or Azure OpenAI Service provide access to powerful foundation models via APIs. This allows enterprises to experiment and integrate MFMs into their applications without having to manage the underlying infrastructure or model training.

Partner with AI Experts

If internal expertise is limited, consider partnering with AI consulting firms or solution providers who specialize in MFM integration and fine-tuning for enterprise use cases.

Focus on Data Governance and Quality

The success of any MFM implementation hinges on the quality and accessibility of your data.

Establish Robust Data Pipelines

Invest in data engineering to create pipelines that can ingest, clean, transform, and integrate multimodal data from various sources into a unified structure suitable for MFM consumption.
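What a “unified structure” looks like will vary, but one plausible shape is a single record per customer that holds a slot for each modality. The sketch below assumes that design. The `MultimodalRecord` class, the row format, and the source names (`crm`, `product_db`, `call_center`) are hypothetical, and the cleaning step is reduced to trimming whitespace and skipping empty values.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class MultimodalRecord:
    """One customer's data across modalities (hypothetical schema)."""
    record_id: str
    text: Optional[str] = None
    image_path: Optional[str] = None
    audio_path: Optional[str] = None
    source_systems: list[str] = field(default_factory=list)


def merge_sources(rows: list[dict]) -> dict[str, MultimodalRecord]:
    """Group rows from different systems into one record per customer."""
    records: dict[str, MultimodalRecord] = {}
    for row in rows:
        rec = records.setdefault(row["customer_id"], MultimodalRecord(row["customer_id"]))
        value = row["value"].strip()
        if not value:
            continue  # basic cleaning: skip empty payloads
        if row["modality"] == "text":
            rec.text = value
        elif row["modality"] == "image":
            rec.image_path = value
        elif row["modality"] == "audio":
            rec.audio_path = value
        else:
            raise ValueError(f"unknown modality: {row['modality']}")
        rec.source_systems.append(row["source"])
    return records


# Illustrative rows, as they might arrive from three separate systems.
rows = [
    {"customer_id": "c-1", "source": "crm", "modality": "text", "value": "  Screen flickers after update "},
    {"customer_id": "c-1", "source": "product_db", "modality": "image", "value": "images/c-1/screen.png"},
    {"customer_id": "c-2", "source": "call_center", "modality": "audio", "value": "audio/c-2/call.wav"},
    {"customer_id": "c-2", "source": "crm", "modality": "text", "value": "   "},
]

unified = merge_sources(rows)
for record in unified.values():
    print(record)
```

Keeping track of which systems contributed to each record, as `source_systems` does here, also helps later with the governance questions of ownership and access.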

Implement Data Governance Policies

Ensure clear policies for data ownership, access, security, and privacy are in place. This is crucial for maintaining compliance and trust, especially when dealing with sensitive customer or operational data.

By approaching multimodal foundation models strategically and with an understanding of both their immense potential and practical challenges, enterprises can truly unlock new levels of insight and drive innovation in their data analysis. It’s not just about more data; it’s about smarter, more connected understanding.

FAQs

What are multimodal foundation models for enterprise data analysis?

Multimodal foundation models for enterprise data analysis are advanced machine learning models that can process and analyze data from multiple sources and in various formats, such as text, images, and numerical data. These models are designed to provide a comprehensive understanding of complex enterprise data and enable more accurate and insightful analysis.

How do multimodal foundation models benefit enterprise data analysis?

Multimodal foundation models offer several benefits for enterprise data analysis, including the ability to process and analyze diverse types of data, improved accuracy and reliability of analysis results, and the ability to uncover insights and patterns that may not be apparent through traditional analysis methods. These models also have the potential to streamline and automate data analysis processes, saving time and resources for enterprises.

What are some examples of multimodal foundation models for enterprise data analysis?

Well-known transformer-based foundation models such as BERT (Bidirectional Encoder Representations from Transformers), GPT (Generative Pre-trained Transformer), and T5 (Text-to-Text Transfer Transformer) were originally text-only. Multimodal models build on the same transformer architecture to handle more than one data type. CLIP, for example, learns a shared representation of images and text, and later GPT models such as GPT-4 accept images as well as text.

How are multimodal foundation models trained for enterprise data analysis?

Multimodal foundation models are typically trained using large and diverse datasets that contain a mix of different types of data, such as text, images, and numerical data. The training process involves optimizing the model’s parameters to learn representations of the various data modalities and their relationships, enabling the model to effectively process and analyze multimodal data in enterprise settings.

What are the potential challenges of using multimodal foundation models for enterprise data analysis?

Challenges of using multimodal foundation models for enterprise data analysis include the need for large and diverse training datasets, the complexity of integrating and processing multimodal data, and the potential for biases and limitations in the model’s representations of different data modalities. Additionally, deploying and maintaining multimodal foundation models in enterprise environments may require specialized expertise and resources.