Let’s talk about synthetic data in medical research. Simply put, synthetic data is artificially generated data that mimics the statistical characteristics of real-world data without revealing any actual individual patient information. It’s becoming a really big deal because it offers a way to overcome some major roadblocks in medical studies, especially around data privacy and access. Instead of using sensitive patient records directly, researchers can work with these fabricated datasets that behave in a similar way, allowing them to develop and test hypotheses, train AI models, and explore new avenues without the usual privacy headaches. This isn’t just a clever trick; it’s a practical solution to a pervasive problem.

The medical field is overflowing with incredibly valuable data, but it’s also arguably the most sensitive. Patient privacy is paramount, and rightly so. This creates a huge challenge: how do you leverage this goldmine of information for incredible breakthroughs without compromising individual confidentiality? That’s where synthetic data steps in as a game-changer.

Overcoming Privacy Barriers

This is probably the biggest advantage. Real medical data is governed by strict regulations like HIPAA in the US or GDPR in the EU. Gaining access to large, diverse datasets for research is a bureaucratic nightmare, often taking months or even years, and even then, the data might be heavily de-identified, limiting its utility.

- Faster Access: Synthetic data can usually be shared much more freely, significantly speeding up research timelines. In many settings it avoids the lengthy ethics committee approvals required for patient data, though the exact requirements depend on the jurisdiction and the synthetic data generation process itself still needs ethical oversight.

- Reduced Risk: If synthetic data is generated correctly, it shouldn’t be possible to re-identify individuals, massively reducing the risk of privacy breaches.

- Broader Collaboration: Researchers from different institutions, even across international borders, can collaborate on projects using synthetic datasets without the complex data sharing agreements typically required for real patient data.

Addressing Data Scarcity and Imbalance

Sometimes, you just don’t have enough data, especially for rare diseases or specific patient subgroups. Or, your data might be heavily biased towards certain demographics or common conditions.

- Filling Data Gaps: Synthetic data generators can be used to create more instances of rare conditions, effectively “balancing” a dataset for machine learning training. This is particularly valuable for developing diagnostic tools for diseases that don’t have many recorded cases.

- Augmenting Small Datasets: For studies with limited cohort sizes, synthetic data can expand the dataset, allowing more robust statistical analyses and the development of more generalizable models.

- Simulating “What If” Scenarios: Researchers can generate synthetic data that represents hypothetical patient populations or treatment outcomes, enabling them to explore scenarios that haven’t occurred in real life or are ethically impossible to test directly.
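To make the "Filling Data Gaps" idea concrete, here is a minimal sketch of SMOTE-style oversampling, one common way to create extra minority-class rows by interpolating between existing ones. The function name `smote_like_oversample`, the two columns (age and a biomarker level), and the example values are assumptions made up for illustration, not real patient data or a library API.

```python
import random

def smote_like_oversample(minority, n_new, k=3, seed=0):
    """Create n_new synthetic rows by interpolating between a minority row
    and one of its k nearest minority neighbours (SMOTE-style)."""
    rng = random.Random(seed)
    synthetic = []
    for _ in range(n_new):
        base = rng.choice(minority)
        # Rank the other minority rows by squared Euclidean distance to base.
        others = [row for row in minority if row is not base]
        others.sort(key=lambda row: sum((a - b) ** 2 for a, b in zip(base, row)))
        neighbour = rng.choice(others[:k])
        gap = rng.random()  # position along the line segment, in [0, 1)
        synthetic.append([a + gap * (b - a) for a, b in zip(base, neighbour)])
    return synthetic

# Hypothetical rare-condition rows: [age, biomarker level]
rare_cases = [[34.0, 1.2], [41.0, 1.5], [38.0, 1.1], [45.0, 1.9]]
new_rows = smote_like_oversample(rare_cases, n_new=6)
```

Because every new row sits on a line between two real rows, this approach only fills in the space between known cases. It cannot invent variability the original cohort never showed, which is worth remembering when the cohort is tiny.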

Fostering Innovation and Development

Synthetic data isn’t just about overcoming limitations; it’s about enabling new possibilities.

- Algorithm Development & Testing: AI algorithms, especially deep learning models, thrive on large, diverse datasets. Once a generator has been trained, synthetic data offers a practically unlimited supply for training, testing, and fine-tuning these models, so real patient records are only needed again for final validation.

- Software Prototyping: Developers can build and test new medical software, electronic health record (EHR) systems, or analytics dashboards using realistic synthetic data before integrating them with sensitive live systems. This dramatically reduces the risk of errors and speeds up development cycles.

- Educational Tools: Medical students and junior researchers can practice data analysis, statistical modeling, and even clinical reasoning using realistic synthetic patient cases without any ethical concerns.

How Synthetic Data is Made: Under the Hood

Generating synthetic data isn’t just about random numbers; it’s a sophisticated process that aims to capture the statistical essence of the original data. There are several approaches, each with its strengths and weaknesses.

Rule-Based and Statistical Models

These are some of the simpler, more traditional methods. They rely on pre-defined rules or statistical distributions observed in the real data.

- Attribute-by-Attribute Generation: This method involves looking at the statistical properties (like mean, standard deviation, correlation) of each individual feature in the original dataset and then generating new data points for each feature independently or based on simple relationships. For example, if age in your real data is normally distributed with a certain mean and standard deviation, you generate new ages following that distribution.

- Probabilistic Graphical Models (e.g., Bayesian Networks): These models learn the probabilistic relationships between different variables in the real data. Once these relationships are mapped out, new synthetic data can be sampled from the learned network. This allows for more complex inter-dependencies between features than simple attribute-by-attribute generation.

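- Decision Tree-Based Methods: Algorithms like CART (Classification and Regression Trees) model the data by learning a series of splitting rules, typically synthesizing one variable at a time conditional on the variables already generated. New synthetic records are then drawn according to these learned rules. (CTGAN, the Conditional Tabular GAN, is sometimes mentioned alongside these methods, but it is a neural network approach and belongs with the GANs covered under Machine Learning-Based Approaches.)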

The upside of these methods is their interpretability and sometimes faster generation. The downside is that they might struggle to capture very complex, non-linear relationships present in high-dimensional medical datasets, potentially leading to synthetic data that isn’t quite as realistic.
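Here is a minimal sketch of attribute-by-attribute generation, along with the weakness that comes with it. The "real" data is itself simulated for the example (age and systolic blood pressure with an assumed linear relationship), and the helper names `fit_marginals`, `sample_independent`, and `pearson` are illustrative.

```python
import random
import statistics

def fit_marginals(rows):
    """Estimate the mean and standard deviation of each column separately."""
    columns = list(zip(*rows))
    return [(statistics.mean(c), statistics.stdev(c)) for c in columns]

def sample_independent(marginals, n, seed=0):
    """Draw each attribute from its own normal distribution, ignoring correlations."""
    rng = random.Random(seed)
    return [[rng.gauss(mu, sigma) for mu, sigma in marginals] for _ in range(n)]

def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    mx, my = statistics.mean(xs), statistics.mean(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    var_x = sum((x - mx) ** 2 for x in xs)
    var_y = sum((y - my) ** 2 for y in ys)
    return cov / (var_x * var_y) ** 0.5

# Hypothetical "real" data: systolic blood pressure rises with age.
rng = random.Random(42)
real = []
for _ in range(2000):
    age = rng.gauss(55, 12)
    sbp = 90 + 0.6 * age + rng.gauss(0, 8)
    real.append([age, sbp])

synthetic = sample_independent(fit_marginals(real), n=2000)

real_corr = pearson(*zip(*real))        # clearly positive
synth_corr = pearson(*zip(*synthetic))  # close to zero
```

The synthetic columns match the real means and spreads, but the age and blood pressure relationship is gone. That loss is the motivation for methods that model dependencies between variables, such as Bayesian networks or tree-based synthesis.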

Machine Learning-Based Approaches

This is where things get really powerful, especially with the rise of deep learning. These methods are designed to learn complex, often non-linear patterns within the data.

- Generative Adversarial Networks (GANs): GANs are a popular deep learning approach. They consist of two neural networks: a generator and a discriminator.

- The generator creates synthetic data.

- The discriminator tries to distinguish between real data and the synthetic data generated by the generator.

- They play a “game”: the generator tries to create data that can fool the discriminator, and the discriminator gets better at spotting fakes. This adversarial process continues until the generator can create synthetic data that is virtually indistinguishable from real data in terms of its statistical properties.

- Advantages: GANs can be incredibly effective at capturing complex, high-dimensional distributions, leading to very realistic synthetic data. They're particularly good for images, and variants designed for tabular data (such as CTGAN) are also widely applied to patient records.

- Disadvantages: They can be computationally intensive to train and sensitive to hyperparameters. They can also suffer from “mode collapse,” where the generator only produces a limited variety of data samples.

- Variational Autoencoders (VAEs): VAEs are another type of generative neural network. They work by learning a compressed, latent representation (a “code”) of the input data and then learning to reconstruct the original data from this latent code.

- Encoder: Maps the real data into a lower-dimensional latent space.

- Decoder: Reconstructs data from samples taken from this latent space.

- Advantages: VAEs are generally more stable to train than GANs and can provide a smoother latent space, which can be useful for interpolation or generating variations of existing data.

- Disadvantages: The generated samples might sometimes appear “blurrier” or less sharp compared to GANs, especially for image data, though this is less of an issue for tabular medical records.

- Diffusion Models: These are a relatively new but highly effective class of generative models that have shown incredible results in image generation. They work by gradually adding noise to data until it's pure noise, and then learning to reverse this process, "denoising" random noise back into realistic data.

- Advantages: Diffusion models can generate extremely high-quality and diverse samples. They are less prone to mode collapse than GANs.

- Disadvantages: Computationally very expensive, especially for sampling new data. Still an active area of research for tabular data generation.
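The "gradually adding noise" half of a diffusion model is simple enough to sketch directly. The example below assumes the standard DDPM-style forward process with a linear noise schedule. The schedule endpoints, the step count, and the example record are illustrative choices. The learned reverse (denoising) network, which is the hard part, is deliberately left out.

```python
import math
import random

def linear_beta_schedule(num_steps, beta_start=1e-4, beta_end=0.02):
    """Noise variances that grow linearly from beta_start to beta_end."""
    step = (beta_end - beta_start) / (num_steps - 1)
    return [beta_start + i * step for i in range(num_steps)]

def cumulative_alphas(betas):
    """alpha_bar_t = product over s <= t of (1 - beta_s)."""
    alpha_bars, running = [], 1.0
    for beta in betas:
        running *= 1.0 - beta
        alpha_bars.append(running)
    return alpha_bars

def add_noise(x0, t, alpha_bars, rng):
    """Sample x_t from x_0 in one shot:
    x_t = sqrt(alpha_bar_t) * x_0 + sqrt(1 - alpha_bar_t) * eps, eps ~ N(0, 1)."""
    signal = math.sqrt(alpha_bars[t])
    noise = math.sqrt(1.0 - alpha_bars[t])
    return [signal * v + noise * rng.gauss(0.0, 1.0) for v in x0]

alpha_bars = cumulative_alphas(linear_beta_schedule(1000))
rng = random.Random(0)
record = [0.8, -1.2, 0.3]  # hypothetical standardized lab values
slightly_noisy = add_noise(record, t=10, alpha_bars=alpha_bars, rng=rng)
almost_pure_noise = add_noise(record, t=999, alpha_bars=alpha_bars, rng=rng)
```

Early steps keep almost all of the original signal, while by the last step the record is essentially indistinguishable from Gaussian noise. Generation then runs the learned denoiser through all those steps in reverse, which is why sampling is expensive.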

Challenges and Considerations

While synthetic data offers immense potential, it’s not a magic bullet. There are important challenges and ethical considerations to keep in mind.

Data Fidelity and Utility

The whole point of synthetic data is that it should be “good enough” to substitute real data. But how do you define “good enough”?

- Statistical Similarity: Does the synthetic data preserve the same mean, median, variances, distributions, and correlations as the original data? This is crucial for maintaining the validity of statistical analyses.

- Preservation of Insights: Can a model trained on synthetic data perform equally well when tested on real data? Does it uncover the same relationships or patterns that would be found in the original data? This is about the utility of the synthetic data.

- Rare Event Representation: It can be challenging for synthetic data generators to accurately reproduce rare events or outliers, which can sometimes be the most clinically significant findings. If the generator doesn't "see" enough examples of a rare condition, it might struggle to create realistic synthetic versions.
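A first-pass fidelity check can be as simple as comparing per-column means and pairwise correlations. The sketch below is one possible way to do that. The metric names (`max_mean_gap`, `max_corr_gap`), the simulated "real" data, and the deliberately poor generator (which just shuffles one column) are all assumptions for illustration.

```python
import random
import statistics

def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    mx, my = statistics.mean(xs), statistics.mean(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    var_x = sum((x - mx) ** 2 for x in xs)
    var_y = sum((y - my) ** 2 for y in ys)
    return cov / (var_x * var_y) ** 0.5

def fidelity_report(real, synthetic):
    """Largest standardized mean gap and largest pairwise correlation gap."""
    real_cols, synth_cols = list(zip(*real)), list(zip(*synthetic))
    mean_gaps = [
        abs(statistics.mean(r) - statistics.mean(s)) / statistics.stdev(r)
        for r, s in zip(real_cols, synth_cols)
    ]
    corr_gaps = []
    for i in range(len(real_cols)):
        for j in range(i + 1, len(real_cols)):
            real_corr = pearson(real_cols[i], real_cols[j])
            synth_corr = pearson(synth_cols[i], synth_cols[j])
            corr_gaps.append(abs(real_corr - synth_corr))
    return {"max_mean_gap": max(mean_gaps), "max_corr_gap": max(corr_gaps)}

# Hypothetical "real" data: a lab value that increases with age.
rng = random.Random(1)
real = []
for _ in range(1000):
    age = rng.gauss(60, 10)
    real.append([age, 0.05 * age + rng.gauss(0, 0.3)])

# A deliberately poor "generator": same column values, but one column shuffled.
shuffled = [row[1] for row in real]
rng.shuffle(shuffled)
poor_synthetic = [[row[0], value] for row, value in zip(real, shuffled)]

report = fidelity_report(real, poor_synthetic)
```

Here the means match perfectly while the correlation gap is large, which shows why marginal statistics alone are not enough. In practice you would also train a model on the synthetic data and test it on held-out real data to check utility.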

Privacy Guarantees and Re-identification Risk

The primary driver for synthetic data is privacy, so ensuring it’s genuinely private is non-negotiable.

- Formal Privacy Measures (e.g., Differential Privacy): Some synthetic data generation methods incorporate differential privacy, a mathematical framework in which calibrated noise is added during training or generation so that the output changes only slightly, in a provable sense, whether or not any single patient's record is included. The strength of the guarantee is set by a privacy parameter (usually written ε): smaller values mean stronger privacy but typically lower data utility. It is one of the strongest formal privacy guarantees currently available.

- Attack Vectors: Researchers need to be aware of potential re-identification attacks. Even if synthetic data doesn't contain direct identifiers, an attacker might be able to combine publicly available information with synthetic data attributes to infer real individuals. Robust evaluation is key here.

- Ethical Oversight of Generation: The process of creating synthetic data from real patient data still requires careful ethical review. Who owns the synthetic data? What are acceptable uses? How are bias and fairness handled in the generative models?
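To give a feel for how differential privacy adds "controlled noise", here is a minimal sketch of the Laplace mechanism applied to a single counting query. This is the basic building block only, not a full differentially private data generator. The `patients` list, the `diabetes` field, and the epsilon value are hypothetical.

```python
import random

def laplace_noise(scale, rng):
    """The difference of two independent Exponential(1) draws is Laplace(0, 1)."""
    return scale * (rng.expovariate(1.0) - rng.expovariate(1.0))

def private_count(records, predicate, epsilon, rng):
    """Release a count with epsilon-differential privacy.

    A counting query has sensitivity 1: adding or removing one patient
    changes the true count by at most 1, so the noise scale is 1 / epsilon.
    """
    true_count = sum(1 for r in records if predicate(r))
    return true_count + laplace_noise(1.0 / epsilon, rng)

# Hypothetical cohort: 100 patients, 30 of them with a diabetes flag.
patients = [{"diabetes": i < 30} for i in range(100)]
rng = random.Random(0)
noisy = private_count(patients, lambda p: p["diabetes"], epsilon=1.0, rng=rng)
```

A smaller epsilon means a larger noise scale, so the released count is more private but less accurate. Each additional query also spends more of the privacy budget, which is why real systems track the total epsilon used across everything they release.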

Model Complexity and Explainability

Advanced machine learning models, while powerful, can be complex to manage and understand.

- Black Box Nature: Deep learning models, including GANs and VAEs, are often “black boxes.” It can be hard to fully understand how they generate synthetic data or why they might fail to capture certain nuances.

- Bias Amplification: If the original real data contains biases (e.g., underrepresentation of certain ethnic groups, or biases in diagnosis patterns), the generative model can learn and even amplify these biases in the synthetic data, perpetuating existing inequalities. Careful auditing of both input and output data is essential.

- Computational Resources: Training sophisticated generative models, especially GANs and Diffusion models, requires significant computational power (GPUs, cloud resources) and expertise in machine learning. This can be a barrier for smaller research groups.
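Auditing for bias amplification can start with something very simple: compare how often each subgroup appears in the real data and in the synthetic data. The sketch below assumes each record carries a single subgroup label. The group names and counts are invented for the example.

```python
from collections import Counter

def subgroup_shares(labels):
    """Fraction of records belonging to each subgroup."""
    counts = Counter(labels)
    total = len(labels)
    return {group: n / total for group, n in counts.items()}

def representation_gaps(real_labels, synthetic_labels):
    """Synthetic share minus real share for every subgroup seen in either dataset."""
    real = subgroup_shares(real_labels)
    synth = subgroup_shares(synthetic_labels)
    groups = sorted(set(real) | set(synth))
    return {g: synth.get(g, 0.0) - real.get(g, 0.0) for g in groups}

# Hypothetical subgroup labels: group C is already rare in the real data.
real_groups = ["A"] * 70 + ["B"] * 25 + ["C"] * 5
synthetic_groups = ["A"] * 78 + ["B"] * 21 + ["C"] * 1
gaps = representation_gaps(real_groups, synthetic_groups)
```

In this made-up case the majority group grows from 70% to 78% while the smallest group shrinks from 5% to 1%, exactly the pattern to watch for. A fuller audit would also compare outcome rates and model performance within each subgroup, not just headcounts.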

Current Applications in Medical Research

Synthetic data is already making waves across various medical domains. Its versatility means it can be tailored for many specific research questions.

Training and Validating AI Models

This is perhaps the most immediate and impactful application.

- Diagnostic Tools: Synthetic images (e.g., X-rays, MRIs, retinal scans) can be generated to train AI models for detecting diseases like pneumonia, diabetic retinopathy, or cancerous lesions, allowing for more diverse and larger training datasets than real patient images could provide due to privacy constraints.

- Prognostic Models: Models that predict disease progression, treatment response, or patient outcomes can be trained on synthetic electronic health record (EHR) data. This can help identify risk factors or personalize treatment plans without exposing individual patient histories.

- Drug Discovery: Synthetic molecular structures or simulated patient responses can accelerate the drug discovery process by providing more experimental data for AI-driven drug design and efficacy prediction.

Privacy-Preserving Data Sharing

Bridging the gap between data silos is a critical problem in collaborative medical research.

- Multi-Center Studies: Institutions can generate synthetic versions of their patient cohorts, aggregate these synthetic datasets, and conduct combined analyses without ever sharing real patient data. This speeds up research and allows for larger, more statistically powerful studies.

- Public Data Release: Research institutions or government health agencies can release high-quality synthetic datasets to the general public or to commercial entities for research and development, significantly broadening the impact of their data while substantially reducing the privacy risk to patients.

- Benchmarking Datasets: Synthetic data can be used to create standardized benchmarking datasets for evaluating new algorithms or methodologies in a privacy-safe manner.

Augmenting Clinical Trials and Rare Disease Research

Getting enough patient data is a constant struggle, especially for less common conditions.

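- Rare Disease Cohorts: For diseases with very few patients, synthetic data can be generated to expand the dataset available for model training, making it more feasible to train predictive models or run exploratory analyses. Keep in mind that synthetic records add no information beyond what the generator learned from the original patients, so conclusions still need to be validated on real data.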

- Clinical Trial Design Simulation: Synthetic patient populations can be generated based on existing trial data to simulate different trial designs, patient recruitment strategies, or treatment arms. This can optimize trial protocols before enrolling a single real patient, potentially saving huge costs and time.

- Placebo vs. Treatment Arm Generation: In certain scenarios, synthetic data might be used to simulate a control group or extrapolate long-term outcomes based on short-term trial data, allowing researchers to explore scenarios for which real data might be scarce or unethical to collect.
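Trial design simulation often boils down to a question like "how many patients per arm do we need?". Here is a rough Monte Carlo sketch that estimates statistical power for a two-arm trial by repeatedly simulating synthetic patients. The effect size, standard deviation, and normal outcome model are assumptions chosen for illustration. A real simulation would draw patients from a generator fitted to prior trial data.

```python
import math
import random
import statistics

def simulate_trial(n_per_arm, effect, sd, rng):
    """Simulate one two-arm trial and report whether it reaches significance."""
    control = [rng.gauss(0.0, sd) for _ in range(n_per_arm)]
    treated = [rng.gauss(effect, sd) for _ in range(n_per_arm)]
    diff = statistics.mean(treated) - statistics.mean(control)
    std_err = math.sqrt(
        statistics.variance(control) / n_per_arm
        + statistics.variance(treated) / n_per_arm
    )
    return abs(diff / std_err) > 1.96  # two-sided z-test at roughly the 5% level

def estimated_power(n_per_arm, effect, sd, n_sims=500, seed=0):
    """Fraction of simulated trials that detect the assumed effect."""
    rng = random.Random(seed)
    hits = sum(simulate_trial(n_per_arm, effect, sd, rng) for _ in range(n_sims))
    return hits / n_sims

# Hypothetical outcome: a 5-point improvement with a standard deviation of 10.
power_small = estimated_power(n_per_arm=20, effect=5.0, sd=10.0)
power_large = estimated_power(n_per_arm=100, effect=5.0, sd=10.0)
```

With these assumed numbers, the small trial misses the effect most of the time while the larger one detects it in the vast majority of runs. Swapping in different recruitment sizes, dropout patterns, or treatment arms is just a matter of changing how the synthetic patients are generated.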

The Future of Synthetic Data in Medicine

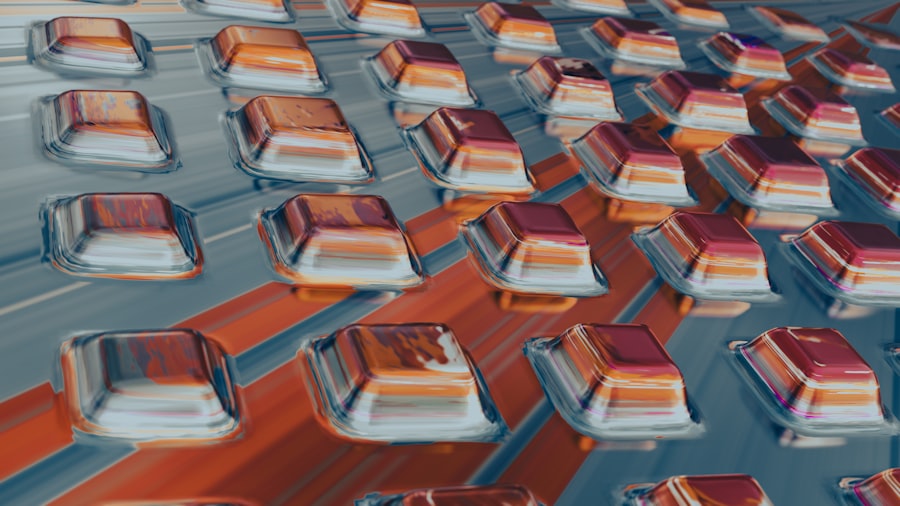

| Technique | Advantages | Disadvantages |
|---|---|---|
| Data Augmentation | Increases dataset size, reduces overfitting | May not capture true variability |
| GANs | Can generate realistic data | Complex to train, mode collapse |
| VAEs | Generates diverse data | Blurry outputs, posterior collapse |
| SMOTE | Addresses class imbalance | May introduce noise, not suitable for all data types |

This field is evolving rapidly, and we’re just scratching the surface of its potential.

Integration with Federated Learning

Federated learning allows multiple institutions to train a shared AI model without sharing their raw data. Coupling this with synthetic data could create a powerful synergy.

- Enhanced Data for Federated Learning: Institutions could initially generate synthetic data from their real data, use this synthetic data for local model pre-training or data augmentation, and then contribute to a federated learning framework with an even stronger local model, further enhancing privacy and model robustness.

- Synthetic Data as an Intermediary: Synthetic data could serve as a secure “data proxy” in a federated learning setup, where institutions train a local model on their real data, then generate synthetic data from the model’s outputs rather than the raw data itself, and share this synthetic summary with a central server. This could add another layer of privacy protection.
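For readers new to federated learning, the core aggregation step is easy to sketch. The example below assumes the widely used federated averaging (FedAvg) rule, where a central server combines each site's model parameters weighted by its cohort size. The two "hospital" models and cohort sizes are hypothetical, and the article itself does not prescribe a particular aggregation method.

```python
def federated_average(site_weights, site_sizes):
    """Combine per-site parameter vectors, weighting each site by its sample count."""
    total = sum(site_sizes)
    n_params = len(site_weights[0])
    return [
        sum(weights[i] * size for weights, size in zip(site_weights, site_sizes)) / total
        for i in range(n_params)
    ]

# Hypothetical local models (two parameters each) from two hospitals.
hospital_models = [[0.2, 1.0], [0.4, 0.0]]
cohort_sizes = [300, 100]
global_model = federated_average(hospital_models, cohort_sizes)
```

Only the parameter vectors leave each hospital, never the patient rows. Pre-training those local models on synthetic data, as described above, would change what goes into `hospital_models` but not the aggregation step itself.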

Beyond Tabular and Imaging Data

While much of the current focus is on these data types, expect expansion.

- Genomic and Proteomic Data: Generating realistic synthetic genomic sequences or proteomic profiles could unlock new avenues in personalized medicine and drug development, allowing researchers to explore disease mechanisms at a molecular level without compromising individual genetic privacy.

- Time-Series and Wearable Data: Data from wearable devices (heart rate, activity levels), continuous glucose monitors, or long-term EHR entries represents time-series data. Generating realistic synthetic versions of these complex temporal patterns could revolutionize predictive health models and remote patient monitoring.

- Electronic Health Record (EHR) Integration: Fully integrated synthetic EHR systems that mimic the complexity and interconnectedness of real-world clinical data will pave the way for testing new clinical decision support systems and optimizing hospital workflows in a safe, sandboxed environment.

Standardized Evaluation Frameworks

As synthetic data becomes more prevalent, robust methods for evaluating its quality and privacy guarantees will become critical.

- Metrics for Fidelity: Developing universal metrics to quantify the statistical similarity, predictive utility, and clinical relevance of synthetic data compared to real data.

- Privacy Auditing Tools: Creating tools and methodologies to rigorously test synthetic datasets for potential re-identification risks and ensure formal privacy guarantees (like differential privacy) are met.

- Ethical Guidelines: Establishing clear, internationally recognized ethical guidelines for the generation, use, and dissemination of synthetic medical data, ensuring fairness, transparency, and accountability across the research ecosystem.

The journey with synthetic data in medicine is still relatively young, but its trajectory suggests it will fundamentally change how we conduct research, share insights, and ultimately, improve patient care in a privacy-conscious world. It’s a testament to how practical solutions can emerge from complex challenges.

FAQs

What is synthetic data generation in medical research?

Synthetic data generation in medical research involves creating artificial data that reproduces the statistical characteristics of real patient data without containing actual patient records. This can be done with techniques ranging from simple statistical models to deep generative models.

Why is synthetic data generation important in medical research?

Synthetic data generation is important in medical research because it allows researchers to access and analyze data without compromising patient privacy. It also enables researchers to create larger and more diverse datasets for analysis and model development.

What are some common techniques used for synthetic data generation in medical research?

Common techniques for synthetic data generation in medical research include generative adversarial networks (GANs), variational autoencoders (VAEs), and data augmentation methods such as SMOTE. Differential privacy is not a generation method in itself, but it can be combined with these techniques to add formal privacy guarantees to the data they produce.

What are the potential benefits of using synthetic data in medical research?

Using synthetic data in medical research can help researchers overcome data access and privacy challenges. It can also enable the development of more robust and generalizable models, as well as facilitate collaboration and data sharing among researchers.

What are the limitations and challenges of synthetic data generation in medical research?

Some limitations and challenges of synthetic data generation in medical research include the need to ensure that the synthetic data accurately represents real patient data, as well as the potential for bias and inaccuracies in the generated data. Additionally, there may be regulatory and ethical considerations when using synthetic data in medical research.