So, you want your digital self to hop between different virtual worlds, huh? That’s the dream, right? Imagine walking from your favorite game into a social VR space, or into a virtual meeting, without having to rebuild your avatar from scratch. Developing avatars that are interoperable across platforms is a big goal. Moving between worlds isn’t yet as easy as changing clothes, but there are some really interesting things happening. It boils down to creating digital identities that aren’t tied to a single walled garden but can travel with you and represent you wherever you go online.

Think of it like this: right now, your avatar in one game is like a character custom-built for that specific game. It has certain features, animations, and a visual style that only make sense within its home environment. It doesn’t automatically understand or fit into another.

Interoperability, in this context, means building avatars that are designed with a common set of standards and data structures. This allows them to be recognized, rendered, and animated across different applications, games, and virtual worlds. It’s about creating a universal digital “you” that can be imported, adapted, and used in various experiences.

Why Is This Even a Thing?

The current state of digital identity is pretty fragmented. Each platform essentially owns your avatar within its ecosystem. If you want to be represented in a new space, you generally have to start over. This is a huge barrier for users who invest time and creativity into their digital personas and for platforms trying to build a cohesive digital universe.

The Core Challenge: Standardization

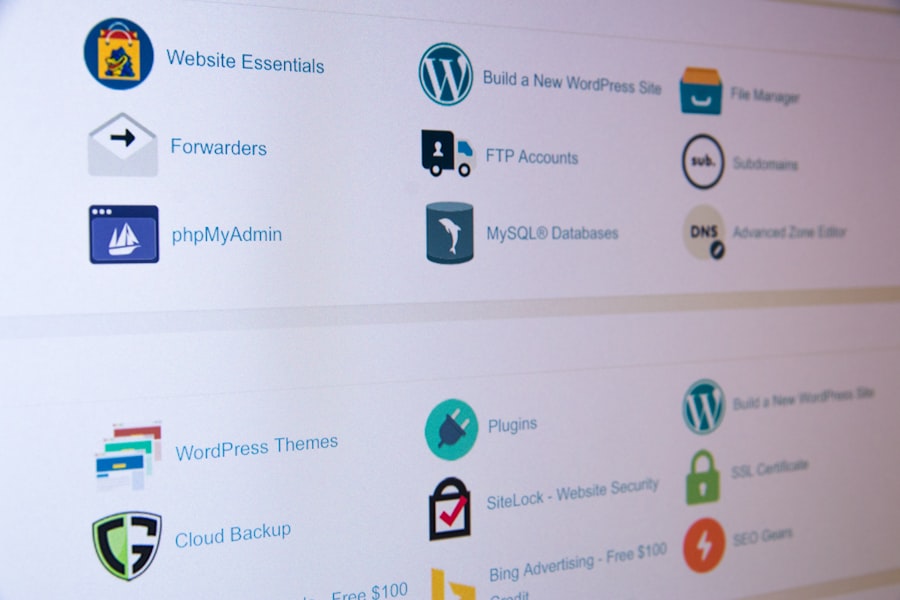

The biggest hurdle is the lack of universal standards. Different platforms use different technologies, data formats, and rendering engines. Getting an avatar built for Unity to display and animate correctly in Unreal Engine, or on a blockchain-based metaverse platform, takes significant effort and, ideally, some common ground.
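A quick count shows why a shared format matters. Suppose each of $n$ platforms wrote a dedicated one-way converter to every other platform. That would take $n(n-1)$ converters in total. If everyone instead agrees on one interchange format, each platform needs just one exporter and one importer, or $2n$ in total. This is a simplified illustration, not a measured cost:

```latex
C_{\text{pairwise}}(n) = n(n-1), \qquad C_{\text{shared}}(n) = 2n
```

With five platforms that comes to 20 converters versus 10. With twenty platforms it is 380 versus 40.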


The Technical Hurdles: How to Actually Do It

This is where things get a bit more technical, but it’s also where the innovation is happening. Making avatars portable involves several key areas of development.

3D Model Formats and Data

The foundation of any avatar is its 3D model. But not all 3D models are created equal for this kind of portability.

Universal File Formats

Right now, FBX and glTF are the most common candidates. FBX is a long-established interchange format, but it is proprietary (owned by Autodesk). glTF (GL Transmission Format) is an open standard from the Khronos Group. It is often highlighted as a modern, efficient format for transmitting 3D scenes and models, including meshes, materials, textures, and animations. It’s designed to be extensible and performant, which makes it a good candidate for cross-platform avatar data.

- Advantages of glTF: It’s a royalty-free, open standard, which is crucial for broad adoption. It can embed a lot of information within a single file, making it easier to transfer complex avatars.

- Challenges with existing models: Many existing avatars are locked into proprietary formats or are not optimized for interoperability. Converting and adapting them can be a complex process.
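Here is what "meshes, materials, textures, and animations in one document" looks like in practice. This sketch reads the JSON part of a glTF 2.0 asset and summarizes the avatar-relevant pieces, including which nodes each skin uses as joints. The sample document is heavily trimmed and is not a complete, valid glTF file. `summarize_gltf` is a hypothetical helper.

```python
import json


def summarize_gltf(gltf_json: str) -> dict:
    """Summarize the avatar-relevant parts of a glTF 2.0 JSON document."""
    doc = json.loads(gltf_json)
    nodes = doc.get("nodes", [])
    summary = {
        "version": doc.get("asset", {}).get("version"),
        "meshes": len(doc.get("meshes", [])),
        "materials": len(doc.get("materials", [])),
        "animations": len(doc.get("animations", [])),
        "skins": [],
    }
    for skin in doc.get("skins", []):
        # A skin lists the node indices that act as its joints (the "bones").
        joints = [nodes[i].get("name", f"node{i}") for i in skin.get("joints", [])]
        summary["skins"].append(joints)
    return summary


# Heavily trimmed sample: two joint nodes plus one skinned mesh node.
example = json.dumps({
    "asset": {"version": "2.0"},
    "nodes": [
        {"name": "Hips", "children": [1]},
        {"name": "Spine"},
        {"name": "Body", "mesh": 0, "skin": 0},
    ],
    "meshes": [{"name": "BodyMesh", "primitives": []}],
    "skins": [{"joints": [0, 1]}],
})

print(summarize_gltf(example))
# {'version': '2.0', 'meshes': 1, 'materials': 0, 'animations': 0, 'skins': [['Hips', 'Spine']]}
```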

Rigging and Skeletal Structures

An avatar isn’t just a static model; it’s a dynamic entity. The way it’s “rigged” (the digital skeleton and controls that allow it to be animated) is crucial. For interoperability, this rigging needs to be compatible across platforms.

- Standardized Skeleton: The ideal scenario is a common skeletal structure or a mapping process that translates one platform’s skeleton to another’s. This ensures that animations applied to one avatar can be reinterpreted correctly on another.

- Bone Naming Conventions: Even with a similar skeleton, variations in bone naming can cause issues. Platforms need to agree on consistent naming for key body parts (e.g., “left_upper_arm,” “right_hip”).
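A common way to handle differing bone names is a lookup table that maps each platform's names onto one canonical set. The sketch below assumes Mixamo-style source names (`mixamorig:LeftArm`, etc.) and VRM-style camelCase targets (`leftUpperArm`). The table is a hypothetical, partial example. A real pipeline would also cover fingers, extra spine segments, and so on.

```python
# Hypothetical, partial table: Mixamo-style source names -> VRM-style names.
CANONICAL_BONES = {
    "mixamorig:Hips": "hips",
    "mixamorig:Spine": "spine",
    "mixamorig:Head": "head",
    "mixamorig:LeftArm": "leftUpperArm",
    "mixamorig:LeftForeArm": "leftLowerArm",
    "mixamorig:LeftHand": "leftHand",
    "mixamorig:RightArm": "rightUpperArm",
    "mixamorig:RightForeArm": "rightLowerArm",
    "mixamorig:RightHand": "rightHand",
    "mixamorig:LeftUpLeg": "leftUpperLeg",
    "mixamorig:LeftLeg": "leftLowerLeg",
    "mixamorig:RightUpLeg": "rightUpperLeg",
    "mixamorig:RightLeg": "rightLowerLeg",
}


def normalize_bones(names):
    """Split source bone names into a canonical mapping and a list of unknowns."""
    mapping, unmapped = {}, []
    for name in names:
        canonical = CANONICAL_BONES.get(name)
        if canonical is None:
            unmapped.append(name)  # needs a manual or heuristic mapping
        else:
            mapping[name] = canonical
    return mapping, unmapped


mapping, unmapped = normalize_bones(["mixamorig:Hips", "mixamorig:LeftForeArm", "mixamorig:Tail"])
print(mapping)   # {'mixamorig:Hips': 'hips', 'mixamorig:LeftForeArm': 'leftLowerArm'}
print(unmapped)  # ['mixamorig:Tail']
```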

Animation Systems and Retargeting

Once you have a standardized model and rig, you need to consider how animations will work.

Animation Retargeting

This is the process of taking an animation created for one skeleton and applying it to a different, but similar, skeleton. It’s like taking a dance move designed for one dancer and having another performer replicate it, adjusting their own proportions.

- The Process: Retargeting involves mapping the source skeleton’s joints to the target skeleton’s joints and then recalculating the animation curves.

- Accuracy Issues: The success of retargeting depends heavily on the similarity of the skeletons and the quality of the mapping. Significant differences can lead to awkward or unnatural movements.

- Machine Learning: AI and machine learning are increasingly being used to improve animation retargeting, allowing for more nuanced and realistic translations of motion.
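Here is a deliberately naive retargeting sketch in Python. It assumes both skeletons share the same rest pose and joint orientations, so mapped local rotations can be copied unchanged. It only rescales the root (hips) translation by the ratio of hip heights, so a shorter avatar doesn't slide its feet. `Keyframe`, `retarget`, and the bone names are hypothetical. Real retargeting also compensates for differing rest poses and bone lengths, and that is where the accuracy issues above come from.

```python
from dataclasses import dataclass


@dataclass
class Keyframe:
    time: float
    rotations: dict        # bone name -> local rotation quaternion (w, x, y, z)
    root_position: tuple   # hips position (x, y, z) in metres


def retarget(frames, bone_map, source_hip_height, target_hip_height):
    """Naively retarget keyframes from a source skeleton onto a target skeleton.

    Assumes both skeletons share the same rest pose and joint orientations, so
    mapped local rotations are copied unchanged. Only the root translation is
    rescaled, so a shorter avatar takes proportionally shorter steps.
    """
    scale = target_hip_height / source_hip_height
    result = []
    for frame in frames:
        # Drop bones the target has no equivalent for (e.g. a tail).
        rotations = {bone_map[b]: q for b, q in frame.rotations.items() if b in bone_map}
        root = tuple(c * scale for c in frame.root_position)
        result.append(Keyframe(frame.time, rotations, root))
    return result


source = [Keyframe(0.0, {"mixamorig:Hips": (1.0, 0.0, 0.0, 0.0),
                         "mixamorig:Tail": (1.0, 0.0, 0.0, 0.0)}, (0.0, 1.0, 0.5))]
target = retarget(source, {"mixamorig:Hips": "hips"}, source_hip_height=1.0, target_hip_height=0.8)
print(target[0].rotations)      # {'hips': (1.0, 0.0, 0.0, 0.0)}
print(target[0].root_position)  # (0.0, 0.8, 0.4)
```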

Expressive Animations

Beyond basic locomotion, avatars need to convey emotion and personality through facial expressions and gestures.

- Facial Rigging Standards: Similar to body rigging, standardizing facial rigs and the blendshapes (morph targets that create facial expressions) used is essential.

- Lip Sync: A common challenge is keeping lip movement accurately synchronized with audio across different platforms. This usually requires dedicated lip-sync algorithms that map speech sounds to visemes (mouth shapes) and then to the avatar’s blendshapes.
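Here is a small sketch of translating facial data between blendshape conventions. It maps a few ARKit-style face-tracking weights (`eyeBlinkLeft`, `mouthSmileLeft`, `jawOpen`, ...) onto VRM 1.0-style expression presets (`blinkLeft`, `happy`, `aa`). The specific mapping is a hypothetical choice for illustration. Driving the `aa` viseme from jaw opening is only the crudest form of lip sync.

```python
def _weight(weights, name):
    """Read a blendshape weight, treating missing values as 0 and clamping to [0, 1]."""
    return min(max(weights.get(name, 0.0), 0.0), 1.0)


def arkit_to_vrm_expressions(arkit):
    """Map a few ARKit-style blendshape weights to VRM-style expression weights.

    The mapping is a hypothetical, deliberately simple example.
    """
    return {
        "blinkLeft": _weight(arkit, "eyeBlinkLeft"),
        "blinkRight": _weight(arkit, "eyeBlinkRight"),
        # Average both mouth corners into a single "happy" expression.
        "happy": (_weight(arkit, "mouthSmileLeft") + _weight(arkit, "mouthSmileRight")) / 2,
        # Open-mouth viseme driven by jaw opening: a crude lip-sync signal.
        "aa": _weight(arkit, "jawOpen"),
    }


print(arkit_to_vrm_expressions(
    {"eyeBlinkLeft": 1.0, "mouthSmileLeft": 0.6, "mouthSmileRight": 0.4, "jawOpen": 1.3}
))
# {'blinkLeft': 1.0, 'blinkRight': 0.0, 'happy': 0.5, 'aa': 1.0}
```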

Materials, Textures, and Appearance

What your avatar looks like is a big part of its identity. Making that consistent across platforms is tricky.

Physically Based Rendering (PBR)

Many modern engines use PBR workflows to create realistic materials. This approach uses maps (like albedo, normal, roughness, metallic) to define how light interacts with a surface.

- Standardizing Material Properties: While PBR is a step towards consistency, different engines might interpret these maps slightly differently. Agreements on how to define and apply these material properties are needed.

- Texture Formats: Using common texture formats (like PNG, JPG, or DDS) and ensuring they are applied correctly by different renderers is key.
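Here is one way "agreeing on material properties" can look. This function writes a material in glTF 2.0's metallic-roughness form (`baseColorFactor`, `metallicFactor`, `roughnessFactor`). The input side is hypothetical. If a source engine stores glossiness rather than roughness, we assume the common but not universal conversion roughness = 1 - glossiness.

```python
def _clamp01(value):
    """Clamp a factor into the [0, 1] range glTF expects."""
    return min(max(value, 0.0), 1.0)


def to_gltf_material(name, base_color, metallic, roughness=None, glossiness=None):
    """Build a glTF 2.0 material dict using the metallic-roughness model.

    If only glossiness is given, we assume roughness = 1 - glossiness (a common,
    engine-dependent convention). With neither, glTF's default roughness of 1.0 is used.
    """
    if roughness is None:
        roughness = 1.0 - glossiness if glossiness is not None else 1.0
    return {
        "name": name,
        "pbrMetallicRoughness": {
            "baseColorFactor": [_clamp01(c) for c in base_color],  # linear RGBA
            "metallicFactor": _clamp01(metallic),
            "roughnessFactor": _clamp01(roughness),
        },
    }


print(to_gltf_material("jacket", [0.2, 0.3, 0.8, 1.0], metallic=0.0, glossiness=0.25))
```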

Customization and Appearance Layers

Users love to customize their avatars. This adds another layer of complexity.

- Modular Avatar Design: Building avatars from modular components (e.g., interchangeable hair, clothing, accessories) that can be easily swapped and combined is a promising approach for customization.

- Material Swapping and Tinting: Allowing for simple color changes or swapping of material types for clothing items can maintain a level of customization without requiring entirely new assets.
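Here is a minimal data-structure sketch of modular customization. It uses a base body plus named slots that can be swapped. Tinting works by multiplying a part's base color. glTF multiplies `baseColorFactor` with the base color texture, so a multiplicative tint maps onto it naturally. `Part`, `ModularAvatar`, and the asset file names are hypothetical.

```python
from dataclasses import dataclass, field, replace


@dataclass(frozen=True)
class Part:
    asset_uri: str                            # e.g. a .glb file for this piece
    base_color: tuple = (1.0, 1.0, 1.0, 1.0)  # linear RGBA multiplier


@dataclass
class ModularAvatar:
    body: Part
    slots: dict = field(default_factory=dict)  # slot name -> Part

    def equip(self, slot, part):
        """Put `part` into `slot`, replacing whatever was there."""
        self.slots[slot] = part

    def tint(self, slot, rgba):
        """Recolor the part in `slot` by multiplying its base color by `rgba`."""
        part = self.slots[slot]
        tinted = tuple(a * b for a, b in zip(part.base_color, rgba))
        self.slots[slot] = replace(part, base_color=tinted)


avatar = ModularAvatar(body=Part("body.glb"))
avatar.equip("hair", Part("hair_short.glb"))
avatar.tint("hair", (1.0, 0.2, 0.2, 1.0))
print(avatar.slots["hair"].base_color)  # (1.0, 0.2, 0.2, 1.0)
```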

Building Blocks for Interoperability: Key Standards and Technologies

So, what are the actual tools and concepts developers are using or discussing to achieve this?

glTF and Beyond: The File Format Frontrunners

As mentioned, glTF is a strong contender for a universal avatar file format. Its comprehensive feature set and open, royalty-free status make it well suited to the job.

The Power of .glb

glTF also defines a binary container, the .glb file, which bundles the JSON description, geometry, textures, and other assets into a single binary file. This makes it incredibly convenient for transfer and loading.

- Efficiency: .glb files are often optimized for efficient loading and rendering, which is vital for real-time applications.

- Embeddability: It can be easily embedded into other data structures or sent over networks.
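Here is the "single binary file" idea made concrete: a minimal Python sketch of the .glb container layout described in the glTF 2.0 specification. The file starts with a 12-byte header (magic, version, total length), followed by a JSON chunk and an optional binary chunk, each 4-byte aligned. It uses only the standard library and skips the validation a production loader would do. `pack_glb` and `unpack_glb` are our own illustrative names.

```python
import json
import struct

GLB_MAGIC = 0x46546C67   # ASCII "glTF" read as a little-endian uint32
CHUNK_JSON = 0x4E4F534A  # ASCII "JSON"
CHUNK_BIN = 0x004E4942   # ASCII "BIN\0"


def pack_glb(doc, binary=b""):
    """Pack a glTF JSON dict (and an optional binary buffer) into .glb bytes."""
    json_bytes = json.dumps(doc).encode("utf-8")
    json_bytes += b" " * (-len(json_bytes) % 4)  # JSON chunk is padded with spaces
    chunks = struct.pack("<II", len(json_bytes), CHUNK_JSON) + json_bytes
    if binary:
        binary += b"\x00" * (-len(binary) % 4)   # BIN chunk is padded with zeros
        chunks += struct.pack("<II", len(binary), CHUNK_BIN) + binary
    header = struct.pack("<III", GLB_MAGIC, 2, 12 + len(chunks))
    return header + chunks


def unpack_glb(data):
    """Return (json_dict, binary_bytes) from .glb bytes."""
    magic, version, length = struct.unpack_from("<III", data, 0)
    if magic != GLB_MAGIC or version != 2:
        raise ValueError("not a glTF 2.0 binary file")
    offset, doc, binary = 12, None, b""
    while offset < length:
        chunk_len, chunk_type = struct.unpack_from("<II", data, offset)
        body = data[offset + 8: offset + 8 + chunk_len]
        if chunk_type == CHUNK_JSON:
            doc = json.loads(body.decode("utf-8"))
        elif chunk_type == CHUNK_BIN:
            binary = body  # still includes any trailing zero padding
        offset += 8 + chunk_len
    return doc, binary


glb = pack_glb({"asset": {"version": "2.0"}}, b"\x01\x02\x03")
doc, binary = unpack_glb(glb)
print(doc["asset"]["version"], len(glb) % 4)  # 2.0 0
```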

VRM: A Standard for Anime-Style Avatars

VRM is a file format specifically designed for 3D humanoid avatars, particularly popular in the anime/VTuber community. It builds upon glTF.

- Key Features of VRM: It includes standardized metadata (including license and usage permissions), blendshape-based expressions, and a standardized humanoid bone structure, making it easier to use with VR applications and animation tools.

- Interoperability within VRM Ecosystems: While not universally interoperable with all platforms, VRM provides strong interoperability between applications that specifically support the VRM standard.

- Example Use Cases: Live streaming, social VR, and virtual meetings are common areas where VRM is adopted.
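A standardized bone structure makes some checks easy. This sketch tests whether a parsed glTF JSON document carries a VRM 1.0 `VRMC_vrm` extension and reports which required humanoid bones it lacks. The required-bone list reflects our reading of the VRM 1.0 specification and should be checked against it. VRM 0.x files use a different `VRM` extension layout that this sketch does not handle.

```python
# Our reading of the VRM 1.0 required humanoid bones; verify against the spec.
REQUIRED_BONES = {
    "hips", "spine", "head",
    "leftUpperArm", "leftLowerArm", "leftHand",
    "rightUpperArm", "rightLowerArm", "rightHand",
    "leftUpperLeg", "leftLowerLeg", "leftFoot",
    "rightUpperLeg", "rightLowerLeg", "rightFoot",
}


def vrm_humanoid_report(doc):
    """Return (has_vrm1_extension, sorted list of missing required bones)."""
    ext = doc.get("extensions", {}).get("VRMC_vrm")
    if ext is None:
        return False, sorted(REQUIRED_BONES)
    # In VRM 1.0, humanBones is an object keyed by bone name: {"hips": {"node": 0}, ...}
    present = set(ext.get("humanoid", {}).get("humanBones", {}))
    return True, sorted(REQUIRED_BONES - present)


example_doc = {
    "extensionsUsed": ["VRMC_vrm"],
    "extensions": {"VRMC_vrm": {
        "specVersion": "1.0",
        "humanoid": {"humanBones": {"hips": {"node": 0}, "head": {"node": 1}}},
    }},
}
is_vrm, missing = vrm_humanoid_report(example_doc)
print(is_vrm, len(missing))  # True 13
```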

OpenXR: Standardizing VR/AR Input

While OpenXR doesn’t directly deal with avatar models, it’s crucial for how avatars interact with the virtual world and how user input (like hand movements) is translated.

- Universal Input Handling: OpenXR provides a common API for accessing XR hardware (headsets, controllers). This means hand tracking or controller input can be standardized, allowing avatar hand animations to be driven consistently across different OpenXR-compliant platforms.

- Indirect Avatar Interoperability: By standardizing how users interact, OpenXR indirectly contributes to avatar interoperability by ensuring that a user’s intended actions are interpreted similarly, leading to more consistent avatar behavior.
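Here is one way tracked input can drive an avatar's pose. This Python sketch turns OpenXR hand-tracking joint positions into a single "index finger curl" value that could drive an avatar's finger bones. The joint indices come from the `XrHandJointEXT` enumeration in the `XR_EXT_hand_tracking` extension. We assume the 26 joint positions have already been fetched from the runtime (in C, via `xrLocateHandJointsEXT`). The curl formula itself is our own simple choice, not part of OpenXR.

```python
import math
from enum import IntEnum


class HandJoint(IntEnum):
    """Subset of the XrHandJointEXT indices from XR_EXT_hand_tracking."""
    PALM = 0
    WRIST = 1
    INDEX_PROXIMAL = 7
    INDEX_INTERMEDIATE = 8
    INDEX_DISTAL = 9
    INDEX_TIP = 10


def joint_bend(a, b, c):
    """Bend at joint b in [0, 1]: 0 when a-b-c is straight, 1 when folded back fully."""
    v1 = [p - q for p, q in zip(a, b)]
    v2 = [p - q for p, q in zip(c, b)]
    cos_angle = sum(x * y for x, y in zip(v1, v2)) / (math.hypot(*v1) * math.hypot(*v2))
    angle = math.acos(max(-1.0, min(1.0, cos_angle)))  # clamp against rounding error
    return (math.pi - angle) / math.pi


def index_finger_curl(positions):
    """positions: 26 (x, y, z) tuples indexed by XrHandJointEXT value."""
    return joint_bend(positions[HandJoint.INDEX_PROXIMAL],
                      positions[HandJoint.INDEX_INTERMEDIATE],
                      positions[HandJoint.INDEX_TIP])


hand = [(0.0, 0.0, 0.0)] * 26
hand[HandJoint.INDEX_PROXIMAL] = (0.0, 0.0, 0.0)
hand[HandJoint.INDEX_INTERMEDIATE] = (0.0, 0.04, 0.0)
hand[HandJoint.INDEX_TIP] = (0.04, 0.04, 0.0)  # tip bent 90 degrees
print(round(index_finger_curl(hand), 3))  # 0.5
```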

Decentralized Identity (DID) and Verifiable Credentials (VCs)

This is a more forward-thinking approach, focusing on the ownership and verification of avatar data rather than just its visual representation.

- User-Controlled Identity: DID technology allows users to have self-sovereign identities that are not tied to any single platform.

- Verifiable Credentials: VCs could be used to store and verify aspects of your avatar’s ownership or attributes, allowing you to “prove” you own a specific avatar or have certain unlocked customization options, which could then be recognized by different platforms.

- Blockchain Integration: Some DID methods anchor identifiers on a blockchain for security and decentralization, though others (such as did:web and did:key) do not require one.
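Here is a sketch of what an avatar-ownership credential might look like, shaped after the W3C Verifiable Credentials Data Model 1.1. It binds a holder's DID to a SHA-256 hash of the avatar file, so any platform can check that the file it received is the one the claim refers to. The `AvatarOwnershipCredential` type and `avatarSha256` field are hypothetical, and the DIDs use the spec's `did:example` placeholder method. The credential is also unsigned. A real one needs a cryptographic proof from the issuer and a JSON-LD context that defines the custom terms.

```python
import hashlib
import json
from datetime import datetime, timezone


def avatar_ownership_credential(issuer_did, holder_did, avatar_bytes):
    """Build an UNSIGNED, VC 1.1-shaped credential claiming ownership of an avatar file."""
    return {
        "@context": ["https://www.w3.org/2018/credentials/v1"],
        "type": ["VerifiableCredential", "AvatarOwnershipCredential"],  # 2nd type is hypothetical
        "issuer": issuer_did,
        "issuanceDate": datetime.now(timezone.utc).strftime("%Y-%m-%dT%H:%M:%SZ"),
        "credentialSubject": {
            "id": holder_did,
            # Content hash ties the claim to one exact avatar file (hypothetical field name).
            "avatarSha256": hashlib.sha256(avatar_bytes).hexdigest(),
        },
    }


def matches_avatar(credential, avatar_bytes):
    """Check that a received avatar file is the one the credential refers to."""
    return credential["credentialSubject"]["avatarSha256"] == hashlib.sha256(avatar_bytes).hexdigest()


cred = avatar_ownership_credential("did:example:issuer", "did:example:alice", b"...glb bytes...")
print(json.dumps(cred, indent=2))
print(matches_avatar(cred, b"...glb bytes..."))  # True
```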

The Path Forward: Ecosystems and Collaboration

Interoperability isn’t just about technical specs; it’s also about people and organizations agreeing to work together.

Cross-Platform Initiatives and Consortia

Several groups and companies are working towards creating common ground for digital assets, including avatars.

- Industry Alliances: Organizations like the Metaverse Standards Forum are actively discussing and developing standards for interoperability across various digital platforms, with avatars being a core component.

- Open Source Projects: Collaborative open-source projects allow developers to contribute to building common tools and libraries that facilitate avatar interoperability.

Platform Cooperation and API Development

For avatars to truly move between worlds, platforms need to cooperate.

- Open APIs for Avatar Import/Export: Platforms could develop APIs that allow users to import or export their avatars in standardized formats.

- Partnerships: Companies could form partnerships to enable direct avatar compatibility between their services, creating curated interoperable experiences.
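Here is one way such an import/export API could negotiate formats, sketched in Python. Each platform advertises what it can export and what it accepts, in order of preference. The transfer then uses the target's most-preferred format that the source can produce. `AvatarCapabilities`, `choose_transfer_format`, and the platform names are hypothetical; no existing platform API is implied.

```python
from dataclasses import dataclass


@dataclass
class AvatarCapabilities:
    """What a platform's (hypothetical) avatar API advertises."""
    platform: str
    exports: list  # formats it can export, e.g. ["glb", "vrm"]
    imports: list  # formats it accepts, most preferred first


def choose_transfer_format(source, target):
    """Return the target's most-preferred format that the source can export, or None."""
    exportable = set(source.exports)
    for fmt in target.imports:
        if fmt in exportable:
            return fmt
    return None  # no common format: a conversion step would be needed


world_a = AvatarCapabilities("WorldA", exports=["glb", "vrm"], imports=["vrm"])
meeting_b = AvatarCapabilities("MeetingB", exports=["glb"], imports=["vrm", "glb"])
print(choose_transfer_format(world_a, meeting_b))  # vrm
print(choose_transfer_format(meeting_b, world_a))  # None
```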

User-Driven Demand and Network Effects

Ultimately, user demand will push the industry forward.

- The “Walled Garden” Problem: Users are increasingly frustrated with being locked into specific platforms. The desire for a seamless digital identity is likely to drive the adoption of interoperable solutions.

- Network Effects: When more users and platforms adopt interoperable avatar standards, the value of those standards increases, creating a positive feedback loop.
