The proliferation of deepfake technology presents a multifaceted challenge to corporate security frameworks. This technology, which utilizes artificial intelligence and machine learning to generate synthetic media, is becoming increasingly sophisticated and accessible. Its potential for manipulation across various corporate functions necessitates a comprehensive understanding of its capabilities and implications.

Deepfake technology, at its core, leverages generative adversarial networks (GANs) or autoencoders to manipulate or create realistic synthetic media. This can involve altering existing video or audio, or generating entirely new content.

Generative Adversarial Networks (GANs)

GANs operate through a two-player game: a generator and a discriminator. The generator creates synthetic data, while the discriminator attempts to distinguish between real and fake data. Through this adversarial process, the generator improves its ability to create increasingly convincing fakes, while the discriminator simultaneously enhances its detection capabilities. This dynamic interplay drives the evolution of deepfake quality.
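The two-player game above is usually written as a minimax objective, following the original GAN formulation by Goodfellow et al. (2014). The symbols are introduced here for illustration and do not appear elsewhere in this article: $G$ is the generator, $D$ the discriminator, $p_{\text{data}}$ the distribution of real samples, and $p_z$ the noise distribution the generator draws from.

$$
\min_G \max_D \; V(D, G) = \mathbb{E}_{x \sim p_{\text{data}}}\big[\log D(x)\big] + \mathbb{E}_{z \sim p_z}\big[\log\big(1 - D(G(z))\big)\big]
$$

The discriminator pushes $V$ up by scoring real samples near 1 and generated samples near 0. The generator pushes $V$ down by producing samples that the discriminator scores as real.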

Autoencoders for Facial Swaps

Autoencoders are neural networks trained to encode data into a low-dimensional representation and then decode it back to its original form. In the context of deepfakes, autoencoders are particularly effective for facial swaps. In a common setup, a shared encoder is trained on images of two different individuals, with a separate decoder for each person. Encoding one person’s face and decoding it with the other person’s decoder renders the second person’s features with the first person’s expression and pose. This allows one person’s face to be superimposed onto another’s body in video footage.
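The data flow of a face swap can be sketched in a few lines. This is a toy illustration only, assuming a common arrangement of one shared encoder and one decoder per person. The weight matrices are made-up constants standing in for trained networks, and the names `shared_encoder`, `decoder_a`, `decoder_b`, `reconstruct_a`, and `swap_a_to_b` are hypothetical.

```python
from typing import Callable, List

Vector = List[float]


def make_linear(weights: List[List[float]]) -> Callable[[Vector], Vector]:
    """Return a function that multiplies a vector by a fixed weight matrix."""
    def apply(x: Vector) -> Vector:
        return [sum(w * xi for w, xi in zip(row, x)) for row in weights]
    return apply


# Made-up weights standing in for trained networks (assumption for illustration).
# The encoder compresses a 4-value "face" into a 2-value latent code.
shared_encoder = make_linear([[0.5, 0.5, 0.0, 0.0],
                              [0.0, 0.0, 0.5, 0.5]])
# Each person has their own decoder that expands the latent code back to 4 values.
decoder_a = make_linear([[1.0, 0.0], [1.0, 0.0], [0.0, 1.0], [0.0, 1.0]])
decoder_b = make_linear([[2.0, 0.0], [0.0, 0.0], [0.0, 2.0], [0.0, 0.0]])


def reconstruct_a(face_of_a: Vector) -> Vector:
    """Normal autoencoding: encode A, decode with A's own decoder."""
    return decoder_a(shared_encoder(face_of_a))


def swap_a_to_b(face_of_a: Vector) -> Vector:
    """Face swap: encode A, but decode with B's decoder."""
    return decoder_b(shared_encoder(face_of_a))
```

The only difference between reconstruction and a swap is which decoder receives the latent code, which is why the shared encoder matters: it has to produce a representation that both decoders can read.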

Voice Synthesis and Mimicry

Beyond visual manipulation, deepfake technology extends to audio. Voice synthesis systems can generate speech in the voice of a target individual, often from a relatively small audio sample. This involves analyzing the unique vocal characteristics – pitch, cadence, accent, and intonation – and then replicating them to produce new, convincing spoken content. The potential for these audio deepfakes to mimic key personnel is significant.

Vectors of Attack and Potential Threats

The versatility of deepfake technology makes it a potent weapon for malicious actors seeking to undermine corporate security. Its applications extend across various attack vectors, each posing unique challenges.

Financial Fraud and Extortion

Deepfakes can be instrumental in sophisticated financial fraud schemes. Imagine a scenario where a deepfake audio of a CEO authorizes a substantial wire transfer to an unknown account. The authenticity of the voice, coupled with a fabricated sense of urgency, could bypass standard verification protocols. This is not merely a hypothetical; instances of voice deepfakes being used in real-world financial scams have been reported. Similarly, deepfake videos or audio could be used in extortion attempts, creating fabricated scenarios to damage reputation or coerce individuals into making payments. Even when the voice or face is synthetic, these scams succeed by exploiting human trust, which remains a significant vulnerability.

Espionage and Intellectual Property Theft

The ability to convincingly impersonate individuals opens doors for corporate espionage. A deepfake of a high-ranking employee could be used to gain unauthorized access to sensitive information or influence key decisions. For example, a deepfake video of a researcher “leaking” proprietary information could be used to discredit them or pressure them into revealing actual secrets. Furthermore, deepfakes could be employed in “social engineering” attacks, bypassing physical security measures or digital firewalls by exploiting trust and perceived authority. Consider a deepfake video of a familiar IT technician requesting system access or login credentials.

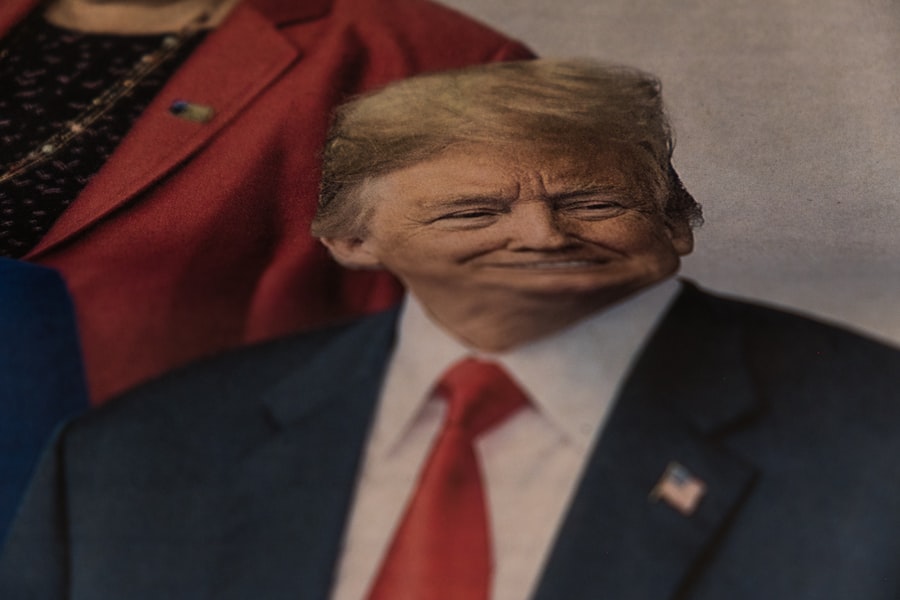

Reputation Damage and Disinformation Campaigns

Deepfakes are a powerful tool for reputation sabotage. Fabricated videos or audio clips depicting executives or employees engaging in unethical, illegal, or socially unacceptable behavior can quickly go viral, causing irreversible damage to a company’s brand image and market value. These disinformation campaigns can be orchestrated to manipulate public perception, discredit competitors, or influence stock prices. The speed and scale at which digital information spreads amplify the impact of such attacks, making rapid and effective countermeasures crucial. The challenge lies not only in demonstrating the falsity of the content but also in combating the initial impact of its virality.

Insider Threats and Internal Sabotage

While often associated with external actors, deepfake technology can also exacerbate insider threats. A disgruntled employee or a malicious actor within the organization could leverage deepfakes to frame colleagues, manipulate internal processes, or sow discord. Imagine a deepfake audio of a manager giving conflicting orders, creating confusion and inefficiency. The internal nature of such attacks often makes them more difficult to detect initially, as they can exploit existing trust networks and internal communication channels. The “trojan horse” of a trusted voice or face can be particularly effective within an established corporate hierarchy.

Detection and Mitigation Strategies

Addressing the threat of deepfakes requires a multi-layered approach, encompassing technological solutions, organizational policies, and employee education. No single solution offers a complete defense, highlighting the need for a comprehensive strategy.

Technological Countermeasures

The arms race between deepfake generation and detection is ongoing. Researchers are continually developing new methods to identify synthetic media.

Deepfake Detection Software

Specialized software utilizes AI and machine learning to analyze various artifacts indicative of deepfakes. These can include inconsistencies in facial movements, unnatural blinking patterns, discrepancies in lighting or shadows, and anomalies in audio waveforms. While not foolproof, these tools are becoming increasingly sophisticated, offering a first line of defense. However, the constant evolution of deepfake generation means detection software requires continuous updates and refinement. It’s a continuous game of cat and mouse, where new detection methods are often followed by new obfuscation techniques.
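One reason detection tools are not foolproof is the base-rate effect: when genuine media vastly outnumbers deepfakes, even a fairly accurate detector produces mostly false alarms. The sketch below works through that arithmetic. The function name `positive_predictive_value` and all input values are assumptions chosen for illustration, not measurements of any real tool.

```python
def positive_predictive_value(sensitivity: float,
                              specificity: float,
                              prevalence: float) -> float:
    """Share of flagged items that really are deepfakes.

    sensitivity: fraction of deepfakes the detector flags
    specificity: fraction of genuine items the detector passes
    prevalence:  fraction of all items that are deepfakes
    """
    true_positives = sensitivity * prevalence
    false_positives = (1.0 - specificity) * (1.0 - prevalence)
    flagged = true_positives + false_positives
    if flagged == 0.0:
        raise ValueError("detector never flags anything with these inputs")
    return true_positives / flagged


# Hypothetical detector: catches 85% of fakes, passes 85% of genuine items,
# and 1 in 100 incoming items is a deepfake.
share_real_alerts = positive_predictive_value(0.85, 0.85, 0.01)
```

With these hypothetical inputs only about 5% of alerts point at an actual deepfake, which is why detection output is better treated as a prompt for further verification than as a verdict.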

Digital Watermarking and Blockchain for Authenticity

To establish the authenticity of legitimate media, digital watermarking and blockchain technology offer promising solutions. Digital watermarks can be embedded into original video and audio files, providing an invisible identifier designed to be tamper-evident. Blockchain, with its append-only ledger, can record the origin and modification history of digital assets, allowing for verification of their unaltered state. These technologies help expose altered copies of authentic content, but they do not stop anyone from generating entirely new deepfake content from scratch; they only make it possible to show that such content lacks a verified origin.
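The ledger idea can be illustrated with a simple hash chain, in which each record stores a fingerprint of the media file plus the hash of the previous record. This is a minimal single-machine sketch, not a blockchain or a watermarking scheme. The record layout and the names `append_record`, `ledger_is_intact`, and `media_matches_latest` are hypothetical.

```python
import hashlib
import json
from typing import Dict, List

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first record


def _entry_hash(entry: Dict[str, str]) -> str:
    """Hash a record's fields in a stable (sorted-key) serialization."""
    payload = json.dumps(entry, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def append_record(ledger: List[Dict[str, str]], media: bytes, note: str) -> None:
    """Append a record that fingerprints the media and links to the previous record."""
    prev_hash = ledger[-1]["entry_hash"] if ledger else GENESIS_HASH
    entry = {
        "media_sha256": hashlib.sha256(media).hexdigest(),
        "note": note,
        "prev_hash": prev_hash,
    }
    entry["entry_hash"] = _entry_hash(entry)  # computed before the key is added
    ledger.append(entry)


def ledger_is_intact(ledger: List[Dict[str, str]]) -> bool:
    """Recompute every hash and link; any edited record breaks the chain."""
    prev_hash = GENESIS_HASH
    for entry in ledger:
        body = {k: v for k, v in entry.items() if k != "entry_hash"}
        if entry["prev_hash"] != prev_hash or _entry_hash(body) != entry["entry_hash"]:
            return False
        prev_hash = entry["entry_hash"]
    return True


def media_matches_latest(ledger: List[Dict[str, str]], media: bytes) -> bool:
    """Check whether a file is byte-identical to the most recently recorded version."""
    if not ledger:
        return False
    return ledger[-1]["media_sha256"] == hashlib.sha256(media).hexdigest()
```

A check like `media_matches_latest` can only confirm that a file matches a recorded version. A file with no matching record is unverified, not proven fake.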

Corporate Policies and Protocols

Beyond technology, robust corporate policies and formalized protocols are essential to build resilience against deepfake threats. These establish clear guidelines for interaction and verification.

Enhanced Verification Procedures

Crucially, organizations must implement enhanced verification procedures for sensitive communications and transactions. This goes beyond simple email confirmation. For example, any high-value financial transaction initiated verbally should require a follow-up video call with specific security questions or a pre-arranged code word. For critical internal directives, multi-factor authentication for communication channels and mandatory in-person or live video verification can significantly reduce vulnerability. Consider this an “airlock” for critical decisions, requiring multiple layers of confirmation.
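The "airlock" idea can be expressed as a small policy check: a high-value request is held until it has been confirmed over channels other than the one it arrived on. The sketch below is one possible shape for such a rule. The threshold, the required number of confirmations, the channel names, and the names `TransferRequest`, `confirm`, and `may_execute` are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Set

# Hypothetical policy values, chosen only for illustration.
HIGH_VALUE_THRESHOLD = 10_000
MIN_INDEPENDENT_CONFIRMATIONS = 2


@dataclass
class TransferRequest:
    requester: str
    amount: float
    origin_channel: str  # channel the request arrived on, e.g. "voice_call"
    confirmations: Set[str] = field(default_factory=set)


def confirm(request: TransferRequest, channel: str) -> None:
    """Record a confirmation obtained over a channel other than the original one."""
    if channel == request.origin_channel:
        raise ValueError("confirmation must use a different channel than the request")
    request.confirmations.add(channel)


def may_execute(request: TransferRequest) -> bool:
    """Low-value requests pass; high-value ones need enough independent confirmations."""
    if request.amount < HIGH_VALUE_THRESHOLD:
        return True
    return len(request.confirmations) >= MIN_INDEPENDENT_CONFIRMATIONS
```

Rejecting confirmations that arrive on the original channel is the key design choice here: if an attacker controls the voice call, a second confirmation on that same call adds nothing.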

Incident Response Planning

Developing a clear and practiced incident response plan for deepfake attacks is paramount. This plan should outline steps for identifying a deepfake, containing its spread, communicating with stakeholders, and initiating legal action if necessary. A well-rehearsed plan minimizes panic and ensures a swift and coordinated response, mitigating potential damage. The speed of response is critical, as deeply ingrained perceptions are difficult to change once formed.

Employee Education and Awareness

The human element remains a critical vulnerability. Educating employees about the nature of deepfakes and how to identify them is a foundational step in corporate security.

Training on Deepfake Recognition

Regular training sessions should be conducted to familiarize employees with common deepfake characteristics and warning signs. This includes visual cues like choppy movements, inconsistent lighting, and unnatural skin tones, as well as audio cues like robotic voices or unusual speech patterns. Employees should feel free to question the authenticity of suspicious communications, even if they appear to originate from trusted sources. Critical observers become an additional line of defense rather than potential victims.

Fostering a Culture of Skepticism

Cultivating a culture of healthy skepticism within the organization is vital. Employees should be encouraged to verify information, especially when it comes from unexpected sources, carries a sense of urgency, or seems out of character for the alleged sender. This psychological defense mechanism, when ingrained in corporate culture, can act as a significant barrier against deepfake manipulation. This isn’t about fostering mistrust, but rather promoting a diligent and critical approach to all forms of communication.
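The three warning signs named above (unexpected source, urgency, out-of-character request) can be turned into a simple triage checklist for training material or a reporting form. The sketch below is only one way to do that. The escalation levels and the names `MessageSignals` and `triage` are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MessageSignals:
    unexpected_source: bool
    urgent: bool
    out_of_character: bool


def triage(signals: MessageSignals) -> str:
    """Map the number of warning signs to a suggested next step."""
    flags = sum((signals.unexpected_source, signals.urgent, signals.out_of_character))
    if flags == 0:
        return "proceed"
    if flags == 1:
        return "verify_second_channel"
    # Two or more warning signs: verify and also tell the security team.
    return "verify_and_report"
```

A checklist like this does not detect deepfakes. It gives employees a shared, low-friction rule for when to pause and verify.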

Legal and Ethical Considerations

The emergence of deepfake technology raises complex legal and ethical questions that extend beyond the immediate corporate security impact. These broader implications require careful consideration and potentially new legislative frameworks.

Data Privacy and Consent

The creation of deepfakes often involves the unauthorized use of an individual’s likeness or voice. This raises significant concerns regarding data privacy and consent. Obtaining, storing, and utilizing personal biometric data without explicit consent can violate privacy rights and may lead to legal repercussions under regulations such as GDPR or CCPA. Establishing clear guidelines for the ethical use of synthetic media tools, especially in research and development, is essential to avoid legal entanglement.

Defamation and Impersonation Laws

Current defamation and impersonation laws may not fully address the nuances of deepfake technology. While traditional laws cover the harm caused by false statements or unauthorized impersonation, the ease and realism of deepfakes complicate legal recourse. Proving malicious intent and attributing responsibility in the context of rapidly circulating synthetic media can be challenging. New legal frameworks may be necessary to specifically address the creation and dissemination of damaging deepfake content. The “plausible deniability” offered by deepfakes can be a significant hurdle for legal redress.

The Problem of “Truth Decay”

Perhaps the most insidious long-term impact of deepfakes is the erosion of trust in digital media, often termed “truth decay.” When discerning between real and fake becomes increasingly difficult, it undermines public confidence in news, official statements, and even personal interactions. This societal fragmentation can have profound consequences for businesses, making it harder to build and maintain trust with customers, investors, and the public. Companies must lead by example in promoting media literacy and investing in verifiable communication methods to combat this erosion of truth. The very foundations of corporate communication, built on trust and authenticity, are at stake.

Future Outlook and Continuous Adaptation

| Metric | Description | Impact on Corporate Security | Example/Statistic |
|---|---|---|---|
| Frequency of Deepfake Attacks | Number of reported deepfake incidents targeting corporations annually | Increased risk of fraud, misinformation, and unauthorized access | Over 200 cases reported globally in 2023 |
| Financial Losses | Estimated monetary losses due to deepfake-related security breaches | Significant financial damage from fraud and reputational harm | Estimated losses exceeding 50 million in 2023 |
| Employee Awareness Level | Percentage of employees trained to recognize deepfake threats | Higher awareness reduces susceptibility to social engineering attacks | Only 35% of employees trained in 2023 |
| Detection Accuracy | Effectiveness of AI tools in identifying deepfake content | Improved detection helps prevent security breaches | Current tools achieve up to 85% accuracy |
| Incident Response Time | Average time taken to respond to deepfake-related security incidents | Faster response limits damage and data loss | Average response time: 48 hours |
| Regulatory Compliance | Percentage of companies implementing policies against deepfake misuse | Compliance reduces legal risks and enforces security standards | Approximately 40% compliance rate in 2023 |

The landscape of deepfake technology is not static; it is an evolving challenge that demands continuous vigilance and adaptation. Predicting the exact trajectory is difficult, but understanding potential developments is key to proactive security.

Advancements in Deepfake Generation

We can anticipate continued advancements in the realism, speed, and accessibility of deepfake generation tools. Deepfakes that require minimal source data, generate in real time, or depict entirely new, non-existent individuals will further complicate detection and attribution. The bar for creating convincing fakes will continue to lower, making the technology available to a broader range of actors. This democratization of deepfake creation presents an ongoing and significant threat.

The Interplay with Other AI Technologies

Deepfakes will likely integrate with other emerging AI technologies. For instance, combining deepfakes with sophisticated AI chatbots could create highly believable and interactive synthetic agents capable of prolonged deception. The fusion of these technologies could lead to multimodal deepfakes that are even more difficult to distinguish from reality, presenting an even more complex challenge for corporate training and detection systems. Imagine a deepfake CEO engaging in a back-and-forth email exchange, followed by a convincing audio call.

The Imperative of Continuous Learning

For corporate security teams, the threat of deepfakes is an ongoing battle. Continuous learning and adaptation are not optional; they are essential. This involves staying abreast of the latest deepfake research, investing in cutting-edge detection technologies, regularly updating security protocols, and fostering a perpetual culture of critical evaluation among employees. The security posture must be fluid, capable of adjusting to new threats as they emerge. The “set it and forget it” approach is particularly dangerous in this rapidly evolving environment.

In conclusion, deepfake technology represents a formidable and dynamic threat to corporate security. Its potential for financial fraud, espionage, reputation damage, and internal sabotage necessitates a robust, multi-faceted defense strategy. By understanding the technology, implementing effective mitigation measures, educating employees, and adapting to future developments, corporations can build resilience against this evolving challenge and safeguard their assets, reputation, and foundational trust. The battle against deepfakes is not merely a technological one; it is a battle for the very veracity of information in the digital age.

FAQs

What is deepfake technology?

Deepfake technology uses artificial intelligence and machine learning to create realistic but fake audio, video, or images that can convincingly mimic real people.

How can deepfake technology affect corporate security?

Deepfakes can be used to impersonate executives or employees, manipulate communications, spread misinformation, and facilitate fraud or social engineering attacks, posing significant risks to corporate security.

What are common targets of deepfake attacks in corporations?

Common targets include company leadership, finance departments, and customer service teams, as attackers may attempt to authorize fraudulent transactions, leak sensitive information, or damage reputations.

How can companies protect themselves against deepfake threats?

Companies can implement employee training, use advanced detection tools, verify communications through multiple channels, and establish strict protocols for sensitive transactions to mitigate deepfake risks.

Are there legal regulations addressing the use of deepfake technology in corporate settings?

Some jurisdictions have introduced laws targeting malicious deepfake use, but regulations vary widely. Corporations should stay informed about relevant laws and incorporate compliance into their security strategies.