Thinking about how to make your high-performance computing (HPC) setup a bit more… compact and efficient? You’ve probably heard about liquid cooling, and it’s not just hype. Implementing liquid cooling systems can indeed significantly shrink the physical footprint of your HPC operations, potentially making them more environmentally friendly and easier to manage. It’s about smarter heat management, which in turn allows for denser configurations and less need for sprawling infrastructure.

The core issue with traditional air-cooled HPC is how much space those massive server racks and the ventilation systems around them take up. Air cooling relies on moving a lot of air, which means large fans, wide aisles for airflow, and often substantial power to keep all that air moving. Liquid cooling flips this.

Dissipating Heat More Effectively

The Science Behind Smaller Footprints

Liquids absorb and transport heat far more effectively than air. Water, for example, stores roughly 3,500 times more heat per unit volume than air for the same temperature rise, and the specialized dielectric fluids used in immersion systems, while less capable than water, still outperform air by a wide margin. This means you need far less flow volume to shed the heat generated by your processors and other components. Think of it like trying to cool yourself down with a fan versus dipping yourself in a pool: the pool is going to have a more dramatic and immediate effect. This increased efficiency directly translates to the ability to pack more processing power into a smaller volume.
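To put rough numbers on the fan-versus-pool comparison, here is a minimal sketch based on the steady-state energy balance Q = ρ · V̇ · c_p · ΔT. It uses approximate textbook properties for air and water at room temperature; the 1 kW heat load and 10 K temperature rise are hypothetical values chosen only for illustration, and a real dielectric fluid would land somewhere between the two.

```python
# Approximate room-temperature properties (textbook values).
AIR = {"density": 1.2, "cp": 1005.0}      # kg/m^3, J/(kg*K)
WATER = {"density": 998.0, "cp": 4180.0}  # kg/m^3, J/(kg*K)


def volumetric_flow_m3_per_s(heat_w, delta_t_k, fluid):
    """Flow needed to carry heat_w watts with a coolant temperature rise of delta_t_k.

    Rearranges the energy balance Q = rho * V_dot * cp * dT for V_dot.
    """
    return heat_w / (fluid["density"] * fluid["cp"] * delta_t_k)


heat_load_w = 1000.0  # hypothetical 1 kW server
delta_t_k = 10.0      # hypothetical allowed coolant temperature rise

air_flow = volumetric_flow_m3_per_s(heat_load_w, delta_t_k, AIR)
water_flow = volumetric_flow_m3_per_s(heat_load_w, delta_t_k, WATER)

print(f"Air:   {air_flow * 1000:.1f} L/s")
print(f"Water: {water_flow * 1000:.4f} L/s")
print(f"Air needs roughly {air_flow / water_flow:.0f}x the volume flow")
```

The ratio depends only on the fluid properties, not on the heat load or temperature rise, which is why the advantage holds from a single chip up to a full data hall.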



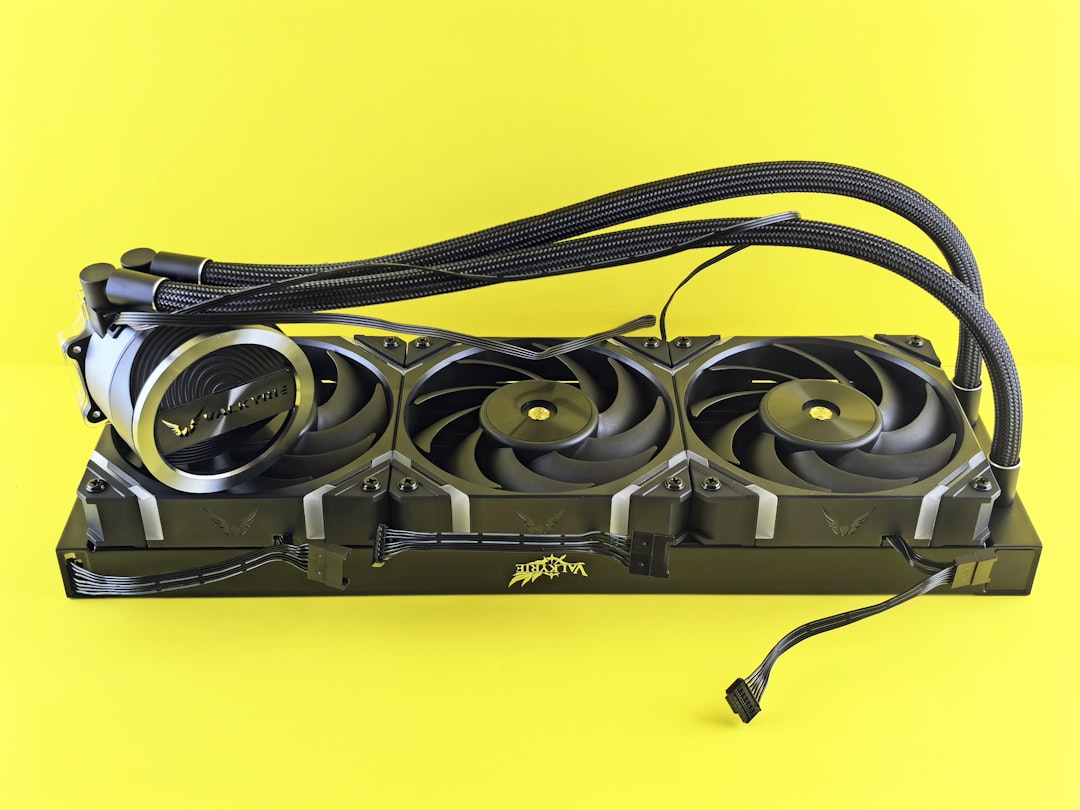

Direct-to-Chip Cooling: Getting Up Close and Personal with Heat

This is where things get really interesting in terms of physical space reduction. Instead of relying on air moving over a whole server chassis, direct-to-chip cooling brings the cooling mechanism right to the source of the heat.

How it Works in Practice

Cold Plates and Heat Transfer

Cold plates are custom-designed blocks that securely attach to the CPU, GPU, or other high-heat components. These plates have channels through which the liquid coolant flows. As the cool liquid passes through, it picks up the heat directly from the chip. The now-warmed liquid then travels to a radiator or heat exchanger to shed its thermal load before recirculating.
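The loop described above can be approximated with two simple relations: the coolant warms by P / (ṁ · c_p) as it crosses the plate, and the chip sits above the coolant by P · R, where R is the plate's thermal resistance. The sketch below is a deliberately simplified lumped model that assumes a water-like coolant; the inlet temperature, chip power, flow rate, and resistance are hypothetical values, not figures from any vendor.

```python
def coolant_outlet_temp_c(inlet_c, chip_power_w, flow_l_per_min,
                          density=998.0, cp=4180.0):
    """Coolant temperature leaving the cold plate, assuming all chip heat enters the liquid."""
    mass_flow_kg_s = density * (flow_l_per_min / 1000.0) / 60.0
    return inlet_c + chip_power_w / (mass_flow_kg_s * cp)


def chip_case_temp_c(inlet_c, chip_power_w, plate_resistance_k_per_w):
    """Chip case temperature from a single lumped thermal resistance to the inlet coolant."""
    return inlet_c + chip_power_w * plate_resistance_k_per_w


# Hypothetical operating point: 30 C inlet, 500 W chip, 1.5 L/min, 0.05 K/W plate.
outlet = coolant_outlet_temp_c(30.0, 500.0, 1.5)
case = chip_case_temp_c(30.0, 500.0, 0.05)
print(f"Coolant outlet: {outlet:.1f} C")
print(f"Chip case:      {case:.1f} C")
```

A real design would use the manufacturer's resistance curve, which varies with flow rate, rather than a single constant.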

The Advantages for Density

By tackling heat at its origin, direct-to-chip solutions reduce, and in some designs eliminate, the need for large, high-speed fans within individual servers. Some airflow is usually still needed for components that have no cold plate, such as memory and storage, but far less of it. This reduction in internal components means server chassis can be made smaller, lighter, and more streamlined. You can then mount more of these streamlined servers side by side or in denser configurations within a rack, leading to a significant reduction in overall rack count and therefore facility footprint.
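The link between rack density and rack count is simple division, sketched below. The total IT load and the per-rack power figures are hypothetical placeholders; achievable densities vary widely with hardware and facility design.

```python
import math


def racks_needed(total_it_load_kw, rack_density_kw):
    """Smallest whole number of racks that can host the given IT load."""
    return math.ceil(total_it_load_kw / rack_density_kw)


total_load_kw = 1000.0     # hypothetical cluster size
air_density_kw = 15.0      # hypothetical air-cooled limit per rack
liquid_density_kw = 60.0   # hypothetical direct-to-chip limit per rack

air_racks = racks_needed(total_load_kw, air_density_kw)
liquid_racks = racks_needed(total_load_kw, liquid_density_kw)
print(f"Air-cooled racks:    {air_racks}")
print(f"Liquid-cooled racks: {liquid_racks}")
```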

Immersion Cooling: Drowning Your Servers (Safely!)

If you want to talk about radical footprint reduction, immersion cooling takes the cake. This is where entire server components or even whole servers are submerged into a non-conductive dielectric fluid.

Single-Phase vs. Two-Phase Immersion

Single-Phase Immersion: The Gentle Submergence

In single-phase immersion, the fluid circulates but doesn’t boil. It’s kept below its boiling point, acting as a highly efficient heat transfer medium. The warmed fluid is then pumped out to a heat exchanger. This method is simpler in terms of fluid management.

Two-Phase Immersion: Leveraging Boiling Power

Two-phase immersion takes it a step further. The fluid is allowed to boil directly on the surface of the heat-generating components. The phase change from liquid to vapor is an incredibly efficient way to absorb heat. The vapor then rises, condenses into a liquid on a cooling coil at the top of the tank, and drips back down. This process is continuous and highly effective.
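One rough way to see why the phase change matters is to compare how much energy a kilogram of fluid absorbs by warming up versus by boiling. The sketch below uses placeholder property values (specific heat, latent heat, temperature rise) that are assumptions for illustration and do not describe any particular product; substitute datasheet numbers for a real fluid.

```python
# Placeholder fluid properties for illustration only (not from any datasheet).
SPECIFIC_HEAT_J_PER_KG_K = 1100.0   # assumed liquid specific heat
LATENT_HEAT_J_PER_KG = 100_000.0    # assumed heat of vaporization


def sensible_heat_j_per_kg(delta_t_k, cp=SPECIFIC_HEAT_J_PER_KG_K):
    """Energy absorbed per kg when the liquid warms by delta_t_k without boiling."""
    return cp * delta_t_k


def boil_off_rate_kg_per_s(heat_w, latent_heat=LATENT_HEAT_J_PER_KG):
    """Mass of fluid vaporized per second to absorb heat_w watts by boiling alone."""
    return heat_w / latent_heat


single_phase = sensible_heat_j_per_kg(delta_t_k=10.0)
ratio = LATENT_HEAT_J_PER_KG / single_phase
vapor_rate = boil_off_rate_kg_per_s(heat_w=1000.0)

print(f"Warming by 10 K absorbs {single_phase:.0f} J/kg")
print(f"Boiling absorbs {LATENT_HEAT_J_PER_KG:.0f} J/kg ({ratio:.1f}x more)")
print(f"A 1 kW load vaporizes about {vapor_rate * 1000:.0f} g of fluid per second")
```

In a sealed tank that vapor is not lost: it condenses on the coil and returns, so the same mass of fluid is reused continuously.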

The Footprint Implications of Submersion

The beauty of immersion cooling from a footprint perspective is the elimination of almost all internal server fans and complex air ducting. The fluid itself acts as the cooling medium, and the tanks can be designed to be quite compact, holding multiple components or servers in a relatively small volume. This allows for incredibly high power densities within a given rack space. You’re no longer limited by airflow dynamics; you’re limited by the fluid’s thermal properties and tank design.

Integrating Radiators and Heat Exchangers: Where the Heat Goes

No matter the liquid cooling method, the heat has to go somewhere. This is where radiators and heat exchangers come into play, and their design and placement are crucial for minimizing the overall footprint.

Traditional Rack-Mounted Radiators

Location, Location, Location

These are often mounted at the rear of server racks. They use fans to push ambient air through fins, transferring heat from the liquid inside the radiator to the air. While still a form of active cooling, these are often more efficient and quieter than massive HVAC systems required for air-cooled data centers.

Optimizing Airflow and Space

Strategically placing these radiators can consolidate heat exhaust zones, reducing the need for widespread air circulation throughout the entire data hall. This localized heat rejection can allow for tighter rack spacing in some scenarios, though ventilation around the radiators is still important.

Facility-Level Heat Rejection

Dry Coolers and Cooling Towers

For larger deployments, heat exchangers might be connected to what are known as dry coolers or cooling towers located outside the facility. These systems then dissipate the heat into the environment, typically using ambient air or water evaporation.

Reducing HVAC Demands

This offloads the bulk of the heat rejection away from the data hall itself, meaning less power is needed for internal HVAC and less physical space dedicated to large, redundant air conditioning units within the building. The footprint savings here are felt not just at the rack level, but at the facility infrastructure level.


Challenges and Considerations for Implementation

| Metric | Before Liquid Cooling | After Liquid Cooling |
|---|---|---|
| Energy Consumption | High | Reduced |
| Footprint Size | Large | Reduced |
| Heat Dissipation | Inefficient | Improved |
| Performance | Stable | Enhanced |

While the footprint reduction benefits are compelling, implementing liquid cooling isn’t entirely plug-and-play and comes with its own set of technical and logistical hurdles.

Fluid Management and Maintenance

Leak Detection and Prevention

This is often the first concern that comes to mind. While modern systems have robust safety features, the possibility of leaks, however small, is a real consideration. Implementing comprehensive leak detection systems, using high-quality fittings and tubing, and regular inspections are vital. The fluid itself can also be corrosive or degrade over time, requiring a maintenance schedule.
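As one illustration of what automated leak detection can look like, the sketch below flags a loop whose reservoir level is falling faster than an allowed rate. The level sensor, the reading format, and the 1 mm per hour threshold are all hypothetical; production systems typically combine several signals, such as moisture-sensing cable, pressure, and flow, rather than relying on a single check.

```python
def detect_leak(readings, max_drop_mm_per_hour=1.0):
    """Return True if the reservoir level is dropping faster than the allowed rate.

    readings: list of (timestamp_s, level_mm) tuples in chronological order,
    as might come from a hypothetical reservoir level sensor.
    """
    if len(readings) < 2:
        return False  # not enough data to estimate a rate
    (t0, level0), (t1, level1) = readings[0], readings[-1]
    elapsed_h = (t1 - t0) / 3600.0
    if elapsed_h <= 0:
        return False
    drop_rate = (level0 - level1) / elapsed_h
    return drop_rate > max_drop_mm_per_hour


steady = [(0, 200.0), (1800, 199.9), (3600, 199.8)]   # 0.2 mm/h: normal drift
leaking = [(0, 200.0), (1800, 198.5), (3600, 197.0)]  # 3.0 mm/h: suspicious

print("steady loop flagged: ", detect_leak(steady))
print("leaking loop flagged:", detect_leak(leaking))
```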

Fluid Compatibility and Cost

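Not all liquids are created equal for cooling. Dielectric fluids are necessary wherever the coolant touches electronics directly, as in immersion systems, to prevent short circuits, and they can be considerably more expensive than the water or water-glycol mixtures commonly used in sealed cold-plate loops. Ensuring compatibility with all hardware components is also essential to avoid material degradation.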

Infrastructure Modifications

Power and Plumbing

Liquid cooling systems require dedicated power for pumps, and depending on the setup, may require plumbing for water supply to heat exchangers or for drain/fill cycles. This means planning for these additions to your existing power and water infrastructure.

Space for Ancillary Equipment

While the servers themselves might take up less space, you’ll need dedicated areas for pumps, reservoirs, heat exchangers, and fluid storage. Careful planning is needed to ensure these components are located accessibly for maintenance without consuming excessive floor space in prime computing areas.
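A quick way to sanity-check the net saving is to count ancillary equipment against the liquid-cooled layout. In the sketch below, the rack counts, the per-rack floor allowance (including a share of aisle space), and the ancillary area are hypothetical planning numbers, not measurements.

```python
def floor_area_m2(rack_count, area_per_rack_m2, ancillary_m2=0.0):
    """Total floor area for a set of racks plus any supporting equipment."""
    return rack_count * area_per_rack_m2 + ancillary_m2


# Hypothetical layouts for the same IT load.
air_area = floor_area_m2(rack_count=67, area_per_rack_m2=2.5)
liquid_area = floor_area_m2(rack_count=17, area_per_rack_m2=2.5,
                            ancillary_m2=20.0)  # pumps, reservoirs, exchangers

print(f"Air-cooled:    {air_area:.1f} m^2")
print(f"Liquid-cooled: {liquid_area:.1f} m^2")
print(f"Net saving:    {air_area - liquid_area:.1f} m^2")
```

Running the numbers this way keeps the comparison honest: the saving is real only if it survives after the pump room and fluid storage are added back in.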

The Shift in Operational Expertise

Training Your Team

Your IT and facilities teams will need to be trained on the specifics of the liquid cooling technology being implemented. This includes understanding the system’s operation, troubleshooting common issues, and performing preventative maintenance. The skill set required will shift from air handling toward liquid cooling, covering pumps, plumbing, and fluid chemistry.

Designing for the Future

The move to liquid cooling often involves rethinking the entire data center design. This isn’t just about swapping out components; it’s about embracing a new paradigm for thermal management that unlocks greater density and, consequently, a smaller overall footprint for your HPC operations. By embracing these technologies, you can build more efficient, more powerful, and more compact computing environments.

FAQs

What are liquid cooling systems for high-performance computing?

Liquid cooling systems for high-performance computing cool electronic components by carrying heat away with a liquid coolant. This method is more efficient than traditional air cooling and can help reduce the footprint of high-performance computing systems.

How do liquid cooling systems reduce the footprint of high-performance computing systems?

Liquid cooling systems reduce the footprint of high-performance computing systems by allowing for more densely packed components, as they do not require as much space for air circulation. This can lead to more efficient use of space in data centers and other computing facilities.

What are the benefits of implementing liquid cooling systems for high-performance computing?

The benefits of implementing liquid cooling systems for high-performance computing include improved energy efficiency, reduced operating costs, increased reliability, and the ability to overclock components for higher performance. Additionally, liquid cooling systems can help extend the lifespan of electronic components.

Are there any drawbacks to using liquid cooling systems for high-performance computing?

While liquid cooling systems offer many benefits, there are some drawbacks to consider. These include the potential for leaks, the need for regular maintenance, and the initial cost of implementing the system. Additionally, liquid cooling systems may require additional expertise to install and maintain.

What are some examples of liquid cooling systems for high-performance computing?

Some examples of liquid cooling systems for high-performance computing include direct-to-chip (cold plate) systems and single-phase or two-phase immersion cooling systems. The liquid loops themselves may be closed, using a sealed circuit that recirculates the same coolant, or open, where the coolant is exposed to the atmosphere at some point, as in an evaporative cooling tower. Immersion cooling systems submerge components directly in a non-conductive liquid coolant.