Hardware ray tracing, a rendering technique that simulates the physical behavior of light, has recently begun to appear in mobile chip architectures. This development promises to bring more realistic lighting, reflections, and shadows to mobile gaming and augmented reality applications.

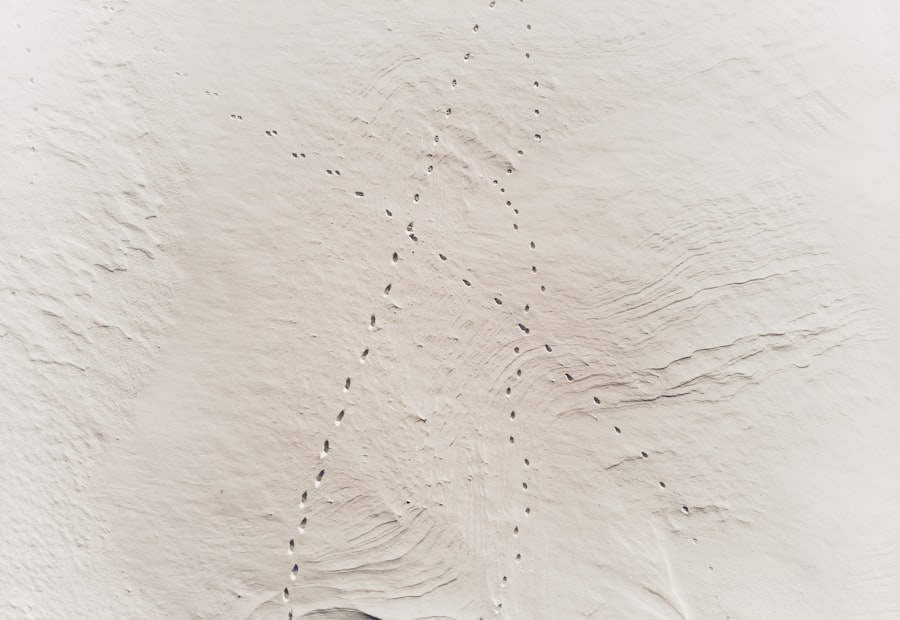

Ray tracing is a rendering algorithm that generates an image by tracing the paths of light rays as they interact with objects in a scene. Unlike traditional rasterization, which projects 3D objects onto a 2D screen, ray tracing works backward from a virtual camera: each pixel on the screen corresponds to a ray that is cast into the scene. When this ray intersects an object, secondary rays may be generated to account for reflections, refractions, and shadows.

Ray Generation

The process begins at the camera, which acts as the origin for primary rays. For each pixel in the final image, a ray is generated and directed into the virtual scene. This ray, often called a camera ray or eye ray, traverses the scene to determine which objects it intersects. The direction of each ray depends on the camera’s field of view (FOV), its position and orientation in 3D space, and the pixel’s location on the image plane.
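As a minimal sketch of primary-ray generation, the Python function below maps a pixel to a camera ray. It assumes a simple pinhole camera at the origin looking down the negative Z axis with a vertical field of view. `camera_ray` is a hypothetical name, and a real renderer would also apply the camera’s position and orientation (a view matrix), which is omitted here.

```python
import math

def normalize(v):
    length = math.sqrt(sum(c * c for c in v))
    return tuple(c / length for c in v)

def camera_ray(px, py, width, height, fov_y_deg):
    """Return (origin, direction) of the primary ray through pixel (px, py).

    Sketch only, assuming a pinhole camera at the origin looking down -Z
    with +Y up, square pixels, and rays through pixel centers. A real
    renderer would also transform the ray by the camera's view matrix.
    """
    aspect = width / height
    # Half-height of the image plane placed at distance 1 from the camera
    scale = math.tan(math.radians(fov_y_deg) / 2)
    # Map the pixel center to [-1, 1], flipping y so row 0 is the top
    x = (2 * (px + 0.5) / width - 1) * aspect * scale
    y = (1 - 2 * (py + 0.5) / height) * scale
    return (0.0, 0.0, 0.0), normalize((x, y, -1.0))
```

For the center pixel of an odd-sized image the ray points straight down -Z; pixels toward the top-right of the image produce rays with positive x and y components.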

Intersection Testing

Once a ray is generated, its fundamental task is to determine which object it hits first. This is the intersection testing phase. Naively, every ray would be tested against every object in the scene, which quickly becomes prohibitive for complex geometry. To avoid this, renderers use spatial data structures such as Bounding Volume Hierarchies (BVHs) or octrees. These structures allow the renderer to quickly discard large portions of the scene where no intersection is possible, significantly reducing the number of explicit intersection calculations.
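The basic test a BVH relies on is whether a ray passes through an axis-aligned bounding box. The sketch below uses the standard slab method; the function name and the `t_max` parameter (the distance of the closest hit found so far) are illustrative assumptions, not any particular API.

```python
import math

def ray_hits_box(origin, direction, box_min, box_max, t_max=math.inf):
    """Slab test (illustrative sketch): True if origin + t*direction, for
    0 <= t <= t_max, passes through the box [box_min, box_max]."""
    t_near, t_far = 0.0, t_max
    for o, d, lo, hi in zip(origin, direction, box_min, box_max):
        if d == 0.0:
            # Ray is parallel to this pair of planes: it must lie between them
            if o < lo or o > hi:
                return False
            continue
        t0, t1 = (lo - o) / d, (hi - o) / d
        if t0 > t1:
            t0, t1 = t1, t0
        # Shrink the interval of t values that lies inside every slab so far
        t_near, t_far = max(t_near, t0), min(t_far, t1)
        if t_near > t_far:
            return False
    return True
```

Passing the current closest-hit distance as `t_max` lets a traversal skip boxes that lie entirely beyond a hit it has already found.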

Shading and Secondary Rays

Upon detecting an intersection, the properties of the intersected object’s surface are evaluated. This shading process determines the color and brightness of that point. The shader considers factors like the material’s color, texture, and how it interacts with light sources. Critically, to account for global illumination effects, secondary rays are often generated from the intersection point.
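To make the shading step concrete, here is a minimal Lambertian (diffuse) shading sketch for a single point light. This is only one simple shading model, chosen for illustration; `shade_lambert` and its parameters are hypothetical, and the sketch deliberately ignores distance falloff, texturing, and the secondary rays discussed next.

```python
import math

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def normalize(v):
    length = math.sqrt(dot(v, v))
    return tuple(c / length for c in v)

def shade_lambert(point, normal, albedo, light_pos, light_color):
    """Diffuse shading of one surface point lit by one point light.

    Illustrative sketch: albedo and light_color are RGB tuples in [0, 1],
    normal must be unit length, and falloff, shadows, and secondary rays
    are ignored.
    """
    to_light = normalize(tuple(l - p for l, p in zip(light_pos, point)))
    # Lambert's cosine law; surfaces facing away from the light get nothing
    cos_theta = max(0.0, dot(normal, to_light))
    return tuple(a * c * cos_theta for a, c in zip(albedo, light_color))
```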

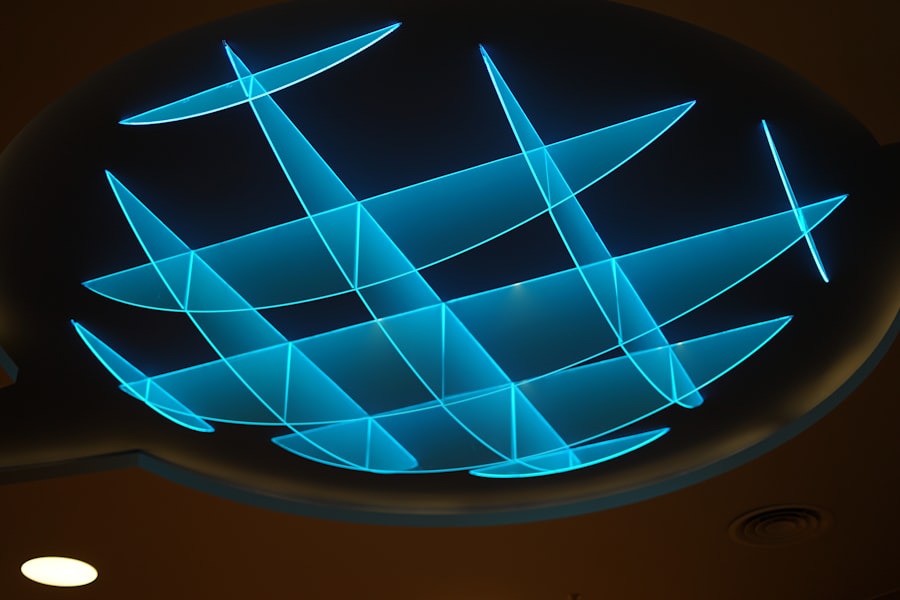

Reflection Rays

If the surface is reflective, a reflection ray is cast into the scene. This ray bounces off the surface according to the law of reflection and continues its journey until it hits another object or exits the scene. The color contribution from this reflection ray is then incorporated into the final pixel color.
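The law of reflection reduces to a one-line vector formula, r = d - 2(d·n)n, for an incoming direction d and unit surface normal n. A minimal sketch, assuming directions are 3-tuples and `n` is unit length (`reflect` is an illustrative name):

```python
def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def reflect(d, n):
    """Mirror direction d about the unit normal n: r = d - 2 (d . n) n.
    Sketch only; assumes n is normalized."""
    k = 2.0 * dot(d, n)
    return tuple(di - k * ni for di, ni in zip(d, n))
```

A ray heading down and to the right onto a floor, `reflect((1.0, -1.0, 0.0), (0.0, 1.0, 0.0))`, leaves heading up and to the right.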

Refraction Rays

For transparent or translucent materials, refraction rays are generated. These rays pass through the object, bending according to Snell’s Law, which describes how light changes direction when passing between different media. The color returned along each refracted ray, representing what is visible through the material, is then combined into the surface’s final shaded color.
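Snell’s Law (n1 sin θ1 = n2 sin θ2) can be turned into a refracted direction vector. The sketch below follows a common textbook formulation; the function name, the convention that the normal faces the incoming ray, and returning `None` for total internal reflection are assumptions made for this example.

```python
import math

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def refract(d, n, eta_i, eta_t):
    """Refract unit direction d through a surface with unit normal n.

    Sketch assumptions: n points toward the incoming side (dot(d, n) < 0),
    eta_i / eta_t are the refractive indices on the incident / transmitted
    sides, and None is returned on total internal reflection.
    """
    eta = eta_i / eta_t
    cos_i = -dot(d, n)
    # Snell's law: sin_t = eta * sin_i, so sin_t^2 = eta^2 * (1 - cos_i^2)
    sin2_t = eta * eta * (1.0 - cos_i * cos_i)
    if sin2_t > 1.0:
        return None  # no real solution: total internal reflection
    cos_t = math.sqrt(1.0 - sin2_t)
    return tuple(eta * di + (eta * cos_i - cos_t) * ni for di, ni in zip(d, n))
```

Going from air (1.0) into glass (about 1.5), a ray at normal incidence passes straight through, while a grazing ray leaving glass for air returns `None`.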

Shadow Rays

To determine if a point on a surface is illuminated by a light source, shadow rays are cast from that point towards each light source. If a shadow ray intersects an object before reaching the light source, the point is considered to be in shadow from that particular light, and its contribution to the final illumination is diminished or removed entirely.
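A shadow ray is an ordinary ray asking a narrower question: does anything lie between the surface point and the light? The sketch below assumes a toy scene made only of spheres (`hit_sphere` and `in_shadow` are hypothetical helpers) and shows two details real renderers also need: offsetting the ray origin slightly along the normal so the surface does not shadow itself, and ignoring hits beyond the light.

```python
import math

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def sub(a, b):
    return tuple(x - y for x, y in zip(a, b))

def hit_sphere(origin, direction, center, radius):
    """Smallest positive t where origin + t*direction meets the sphere, or
    None. direction need not be unit length."""
    oc = sub(origin, center)
    a = dot(direction, direction)
    b = 2.0 * dot(oc, direction)
    c = dot(oc, oc) - radius * radius
    disc = b * b - 4 * a * c
    if disc < 0:
        return None
    sq = math.sqrt(disc)
    for t in ((-b - sq) / (2 * a), (-b + sq) / (2 * a)):
        if t > 0:
            return t
    return None

def in_shadow(point, normal, light_pos, spheres, eps=1e-4):
    """True if any (center, radius) sphere blocks the path to light_pos.

    Sketch assumptions: the origin is nudged eps along the normal to avoid
    self-intersection, and the direction is left unnormalized so the light
    sits at t = 1; only hits with t < 1 count as occluders.
    """
    origin = tuple(p + eps * n for p, n in zip(point, normal))
    to_light = sub(light_pos, origin)
    for center, radius in spheres:
        t = hit_sphere(origin, to_light, center, radius)
        if t is not None and t < 1.0:
            return True
    return False
```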


The Challenges of Mobile Ray Tracing

Despite its visual fidelity, ray tracing is computationally intensive. Historically, it was confined to offline rendering for film, and real-time use arrived first on high-end desktop GPUs. Porting this technology to the constrained power and thermal envelopes of mobile devices presents a unique set of challenges.

Power Consumption

The most significant hurdle for mobile ray tracing is power consumption. Ray tracing involves a large number of complex calculations per pixel, which increases the load on the processing units. On a mobile device, this translates directly to shorter battery life and potential thermal throttling, where the chip reduces its performance to prevent overheating. Efficient algorithms and specialized hardware are crucial to mitigate this.

Thermal Management

Closely related to power consumption is thermal management. Sustained high computational loads generate substantial heat. Mobile devices, with their compact form factors, have limited capacity for heat dissipation. Excessive heat can degrade performance, reduce component lifespan, and make the device uncomfortable to hold. Designers must find a balance between performance and the ability to shed heat effectively.

Performance Constraints

Mobile chips, while powerful for their size, operate under significantly tighter performance budgets than their desktop counterparts. They possess fewer computational units, lower clock speeds, and often share memory bandwidth with other system components. Achieving interactive frame rates with ray tracing on these platforms requires novel approaches to accelerate ray-scene intersection, shading, and the generation of secondary rays.

Memory Bandwidth

Ray tracing can be a memory-hungry process. Scene data, including geometry, textures, and material properties, must be readily accessible. The generation and processing of potentially many rays per pixel can lead to numerous memory accesses. Mobile devices typically have less memory bandwidth than desktop PCs, creating a bottleneck if data is not managed efficiently. Techniques like compact scene representations and on-chip caches become essential.

Hardware Accelerators for Mobile Ray Tracing

To overcome these challenges, mobile chip designers are integrating dedicated hardware components specifically designed to accelerate ray tracing operations. These “fixed-function” blocks offload compute-intensive tasks from the general-purpose shader cores, leading to significant performance and power efficiency gains.

Bounding Volume Hierarchy (BVH) Traversal Units

The BVH is a tree-like data structure that organizes objects in a 3D scene hierarchically. When a ray enters the scene, instead of testing it against every single object, it is tested against the bounding volumes at the top of the BVH. If it intersects a bounding volume, it then proceeds to test against its children’s bounding volumes, and so on, until it finds the closest intersection with an actual primitive.

Functionality

Dedicated BVH traversal units are logic circuits optimized to rapidly navigate these tree structures. They can perform multiple ray-box intersection tests concurrently and identify the next bounding volume to traverse in the hierarchy. This significantly reduces the number of full ray-primitive intersection tests.
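The traversal loop these units implement in hardware can be modeled in software. The Python below is a simplified, stack-based sketch under stated assumptions: the `Node` layout is hypothetical, primitives are tested through a caller-supplied `intersect` function, and children are visited in stored order (traversal hardware typically visits the nearer child first, which is not modeled here).

```python
import math
from dataclasses import dataclass, field

@dataclass
class Node:
    # Hypothetical node layout for this sketch
    box_min: tuple
    box_max: tuple
    children: list = field(default_factory=list)  # empty for leaf nodes
    prims: list = field(default_factory=list)     # primitives stored in a leaf

def ray_box(o, d, lo, hi, t_max):
    """Slab test limited to 0 <= t <= t_max."""
    t0, t1 = 0.0, t_max
    for oi, di, l, h in zip(o, d, lo, hi):
        if di == 0.0:
            if oi < l or oi > h:
                return False
            continue
        a, b = (l - oi) / di, (h - oi) / di
        t0, t1 = max(t0, min(a, b)), min(t1, max(a, b))
        if t0 > t1:
            return False
    return True

def traverse(root, o, d, intersect):
    """Return (t, prim) for the closest hit, or (inf, None).

    intersect(o, d, prim) must return a hit distance t or None. Boxes are
    tested against the closest t found so far, so subtrees lying entirely
    beyond an existing hit are skipped.
    """
    closest_t, closest_prim = math.inf, None
    stack = [root]
    while stack:
        node = stack.pop()
        if not ray_box(o, d, node.box_min, node.box_max, closest_t):
            continue  # prune this whole subtree
        stack.extend(node.children)
        for prim in node.prims:
            t = intersect(o, d, prim)
            if t is not None and t < closest_t:
                closest_t, closest_prim = t, prim
    return closest_t, closest_prim
```

Because each box test is bounded by the closest hit so far, a leaf hidden behind an already-found hit is discarded without testing its primitives.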

Efficiency Benefits

By offloading BVH traversal from general-purpose shader cores, these units free up those cores for other rendering tasks. This leads to reduced power consumption as specialized hardware is more efficient at specific tasks than general-purpose programmable units. It also improves overall rendering throughput, allowing more rays to be processed per unit of time.

Ray-Triangle Intersection Units

At the lowest level of the BVH, when a ray reaches a leaf node containing a primitive (typically a triangle), a precise ray-triangle intersection test must be performed. This involves mathematical calculations to determine if and where a ray intersects the triangle.
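One widely used algorithm for this test is Möller-Trumbore, sketched below in Python. Which exact formulation a given mobile GPU uses in its intersection units is not stated here; the function name and the `eps` tolerance are assumptions for illustration.

```python
def sub(a, b):
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])

def dot(a, b):
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]

def cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])

def ray_triangle(orig, d, v0, v1, v2, eps=1e-9):
    """Möller-Trumbore sketch. Returns (t, u, v) for a hit in front of the
    origin, with (u, v) the barycentric coordinates, or None on a miss."""
    e1, e2 = sub(v1, v0), sub(v2, v0)
    p = cross(d, e2)
    det = dot(e1, p)
    if abs(det) < eps:  # ray is parallel to the triangle's plane
        return None
    inv_det = 1.0 / det
    s = sub(orig, v0)
    u = dot(s, p) * inv_det
    if u < 0.0 or u > 1.0:
        return None
    q = cross(s, e1)
    v = dot(d, q) * inv_det
    if v < 0.0 or u + v > 1.0:
        return None
    t = dot(e2, q) * inv_det
    return (t, u, v) if t > eps else None  # reject hits behind the origin
```

The barycentric coordinates come out of the same arithmetic, which is convenient because shading needs them to interpolate normals and texture coordinates across the triangle.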

Dedicated Hardware

Hardware ray-triangle intersection units are specialized computational units designed to execute these specific geometric computations with high throughput. They can often perform several ray-triangle intersection tests in parallel, further accelerating the process.

Parallel Processing

The ability to perform these intersections in parallel is critical. As rays are traced, many will eventually hit primitives. Having dedicated hardware for this crucial step prevents bottlenecks that would otherwise occur if general-purpose cores were solely responsible for this.

Software Optimization and APIs

Hardware acceleration alone is insufficient. Software must be optimized to leverage these new capabilities effectively. Graphics APIs play a crucial role in exposing hardware features to developers, while sophisticated rendering techniques can further enhance efficiency.

Graphics APIs (Vulkan, DirectX)

Modern graphics APIs like Vulkan and DirectX 12 have introduced extensions and features specifically designed to expose hardware ray tracing capabilities to developers. These APIs provide an interface for creating and managing acceleration structures (like BVHs), dispatching ray generation shaders, and gathering intersection results.

Vulkan Ray Tracing Extension

The Vulkan ray tracing extensions (VK_KHR_acceleration_structure, VK_KHR_ray_tracing_pipeline, and VK_KHR_ray_query), part of a cross-platform API, provide explicit control over ray tracing. Developers can define ray generation, intersection, closest-hit, any-hit, and miss shaders, offering fine-grained control over the ray tracing process, while ray queries allow rays to be traced from existing shader stages without a full ray tracing pipeline. This flexibility allows for complex rendering effects and custom lighting models.
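How these shader stages cooperate for a single ray can be modeled in a few lines. The Python below is a conceptual sketch, not Vulkan or DXR code: `trace_ray` is a hypothetical name, and the `candidates` list stands in for the hits that hardware BVH traversal and intersection testing would report. A ray generation shader would issue one such trace per ray.

```python
import math

def trace_ray(ray, candidates, any_hit, closest_hit, miss):
    """Conceptual model of one trace call (not real Vulkan/DXR code).

    candidates: (t, prim) pairs standing in for what traversal reports.
    any_hit(ray, t, prim) -> bool decides whether a candidate counts,
    e.g. rejecting a transparent texel of an alpha-tested leaf.
    The nearest accepted hit goes to closest_hit; otherwise miss runs.
    """
    best_t, best_prim = math.inf, None
    for t, prim in candidates:  # reporting order is not guaranteed
        if t < best_t and any_hit(ray, t, prim):
            best_t, best_prim = t, prim
    if best_prim is None:
        return miss(ray)
    return closest_hit(ray, best_t, best_prim)
```

In the real APIs, any-hit shaders run only for geometry not marked opaque, and because candidate hits arrive in no fixed order, the model keeps a running closest distance instead of relying on order.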

DirectX Raytracing (DXR)

DirectX Raytracing (DXR), available only on Microsoft platforms (Windows and Xbox), offers a similar level of control. It integrates ray tracing directly into the DirectX 12 ecosystem, making it accessible to game developers already familiar with the API. DXR uses the same shader types (ray generation, intersection, closest-hit, any-hit, miss) to manage ray behavior and surface interactions. Because DXR is not available on Android or iOS, mobile developers reach hardware ray tracing through Vulkan on Android and Apple’s Metal API on iOS.

Denoising Techniques

Even with hardware acceleration, generating enough ray samples per pixel to achieve a completely noise-free image in real-time can be prohibitively expensive on mobile devices. Denoising algorithms are essential for achieving acceptable visual quality with fewer samples.

Machine Learning-Powered Denoisers

Many modern denoising solutions leverage machine learning. Neural networks are trained on large datasets that pair noisy renders with clean, fully converged reference images. At runtime, these networks analyze the noisy ray-traced output and remove noise while preserving important details such as edges and textures. This allows developers to render with significantly fewer rays, reducing computational cost, and then rely on the denoiser to reconstruct a high-quality image. On mobile, such denoisers can run on the dedicated AI accelerators (NPUs) found in many modern SoCs.

Temporal Accumulation

Temporal accumulation reuses information from previous frames to improve the current frame’s quality. By slightly jittering the sample position within each pixel from frame to frame and accumulating the results, a renderer can build a more complete picture over several frames, effectively increasing the sample count without increasing the per-frame computational load. The technique works best in static or slow-moving scenes; when objects or the camera move, the accumulated history must be reprojected using motion vectors and discarded where it no longer matches, or moving objects leave ghosting trails.
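Per pixel, the accumulation step can be as simple as a running average. The sketch below is an illustrative assumption rather than any specific engine’s method: `accumulate` is a hypothetical name, it uses a running mean for the first `max_count` frames and then an exponential moving average, and the caller resets `history_count` to 0 when the history is invalid (for example, after a disocclusion).

```python
def accumulate(history, history_count, sample, max_count=32):
    """Blend this frame's noisy sample into one pixel's history.

    Sketch assumptions: a running mean until max_count frames have been
    accumulated, then an exponential moving average with weight
    1 / max_count so that stale frames gradually fade out.
    Returns (new_history, new_count).
    """
    count = min(history_count + 1, max_count)
    alpha = 1.0 / count
    return history + alpha * (sample - history), count
```

Feeding the jittered samples 2.0, 4.0, 6.0, and 8.0 in turn yields their mean, 5.0. Capping the count trades some residual noise for faster reaction when lighting changes.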


The Future of Mobile Ray Tracing

| Mobile Chipset | Manufacturer | Ray Tracing Support | Ray Tracing Hardware | Performance (FPS in Ray-Tracing-Enabled Games) | Power Consumption (W) | Release Year |
|---|---|---|---|---|---|---|
| Snapdragon 8 Gen 2 | Qualcomm | Hardware Accelerated | Dedicated RT Cores | 30-45 FPS (High Settings) | 5-7 W | 2023 |
| Apple A17 Pro | Apple | Hardware Accelerated | Integrated Ray Tracing Units | 40-50 FPS (High Settings) | 4-6 W | 2023 |
| Exynos 2200 | Samsung | Hardware Accelerated | AMD RDNA 2-based RT Cores | 25-40 FPS (Medium-High Settings) | 6-8 W | 2022 |
| MediaTek Dimensity 9200+ | MediaTek | Hardware Accelerated | Integrated RT Units | 20-35 FPS (Medium Settings) | 5-7 W | 2023 |
| Kirin 9000S | Huawei | Partial Hardware Support | Limited RT Acceleration | 15-25 FPS (Low-Medium Settings) | 5-6 W | 2023 |

The integration of hardware ray tracing into mobile chips marks a significant step towards closing the visual fidelity gap between mobile and desktop platforms. While current implementations are foundational, future advancements will likely unlock its full potential.

Enhanced Visual Fidelity

As hardware capabilities improve and software optimizations mature, mobile games and applications will exhibit increasingly realistic lighting, reflections, and shadows. Imagine a mobile game where every surface reflects its environment accurately, where light bounces naturally creating soft, diffuse illumination, and where shadows are not just approximations but precise representations of light occlusion. This will lead to more immersive and believable virtual worlds.

Augmented Reality (AR) and Virtual Reality (VR)

Ray tracing has profound implications for AR and VR. In AR, accurate light estimation and the ability to seamlessly integrate virtual objects into real-world environments depend heavily on how light interacts with both. Ray tracing can enable virtual objects to cast realistic shadows onto real surfaces and to correctly reflect real-world surroundings, making them appear truly present. For VR, enhanced realism contributes significantly to immersion, reducing the tendency for users to perceive the virtual environment as an artificial construct.

Real-Time Global Illumination

One of the holy grails of real-time rendering is full global illumination, where light bounces multiple times around a scene, illuminating indirectly lit areas. While challenging even on high-end desktop GPUs, hardware ray tracing brings real-time global illumination closer to reality for mobile platforms. This will lead to environments with a much greater sense of depth and atmosphere, where light truly feels like it is a part of the scene rather than an artificial overlay.

New Game Design Opportunities

The availability of advanced rendering techniques often inspires new forms of game design. Developers will be able to create puzzles that involve manipulating light and shadows, narratives woven around realistic reflections, or environmental effects that rely on complex light interactions. This shift in rendering capability is not just about making existing things look better, but about enabling entirely new gameplay experiences.

In summary, the journey of hardware ray tracing to mobile chips is a complex dance between engineering ingenuity and computational constraints. As the technology matures, it promises to redefine visual experiences on handheld devices, bringing a new dimension of realism and immersion to our pockets.

FAQs

What is hardware ray tracing on mobile chips?

Hardware ray tracing on mobile chips refers to the integration of specialized processing units within mobile device processors that accelerate ray tracing calculations. This technology enables more realistic lighting, shadows, and reflections in graphics by simulating the physical behavior of light in real time.

How does hardware ray tracing improve graphics on mobile devices?

Hardware ray tracing enhances graphics by providing more accurate and dynamic lighting effects, such as reflections, refractions, and shadows. This results in more immersive and visually appealing images and games on mobile devices compared to traditional rasterization techniques.

Which mobile chip manufacturers support hardware ray tracing?

Several companies have shipped hardware ray tracing in mobile silicon: Qualcomm in the Snapdragon 8 Gen 2 and later (Adreno GPUs), Samsung in the Exynos 2200 (AMD RDNA 2-based Xclipse GPU), Arm in its Immortalis GPUs such as the Immortalis-G715, MediaTek in the Dimensity 9200 series (which uses the Immortalis-G715), and Apple in the A17 Pro.

Does hardware ray tracing significantly impact battery life on mobile devices?

Hardware ray tracing can increase power consumption due to the additional processing required for complex lighting calculations. However, mobile chips with dedicated ray tracing hardware are designed to optimize performance and energy efficiency, minimizing the impact on battery life compared to software-based ray tracing.

Are there any mobile games or applications that currently use hardware ray tracing?

Yes, some mobile games and applications have started to incorporate hardware ray tracing to enhance visual quality. Titles optimized for devices with ray tracing-capable chips showcase improved lighting and reflections, although widespread adoption is still growing as the technology becomes more common in mobile hardware.